
As the earlier deployment walkthroughs showed, deploying a containerized application on Kubernetes means hand-writing resource manifest files to define each resource object. Every such definition is essentially hard-coded and can hardly be reused. At larger scale, configuring, distributing, versioning, finding, rolling back, or even just inspecting applications becomes a nightmare for users. Helm greatly reduces the difficulty of managing applications.

In short, Helm is the package manager for Kubernetes applications, comparable to yum or apt-get on Linux. It helps users find, share, and use Kubernetes applications. The current version is maintained under the CNCF by Microsoft, Google, Bitnami, and the Helm community. Its core packaging unit is called a chart, which helps users create, install, and upgrade complex applications.

Helm packages Kubernetes resources (Deployment, Service, ConfigMap, and so on) into a chart. Charts that have been built and tested are saved to a chart repository for storage and distribution. Helm also makes releases configurable: it supports version management of application configuration and simplifies version control, packaging, releasing, deleting, and updating of applications deployed on Kubernetes. The Helm architecture components are shown in the figure below:

Helm has the following key concepts:

Helm consists mainly of the Helm client, the Tiller server, and the chart repository (Repository). The communication between these components is shown below:

Helm client: a command-line client tool written in Go that talks to the Tiller server over gRPC. Its main tasks are:

- Local chart development.

- Managing chart repositories.

- Interacting with the Tiller server (sending charts to be installed, querying release information, and upgrading or uninstalling existing releases).

Tiller server: a containerized service that runs inside the Kubernetes cluster. It receives requests from the Helm client and, when necessary, interacts with the Kubernetes API server. Its main tasks are:

- Listening for requests from the Helm client.

- Combining a chart and its configuration to build a release.

- Installing charts into the Kubernetes cluster and tracking the resulting releases.

- Upgrading and uninstalling charts.

Chart repository: only when a chart needs to be distributed should the files of an application's chart be packaged into a compressed archive and pushed to a chart repository. A repository can run as a public hosting platform or as a self-hosted server that serves only a particular organization or individual.

There are two ways to install the Helm client: a precompiled binary or building from source. This article uses the precompiled binary.

1) Download the binary package and install it:

Binary packages can be downloaded from https://github.com/helm/helm/releases , where different versions are available. For example, to install version 2.14.3:

[root@master helm]# wget https://get.helm.sh/helm-v2.14.3-linux-amd64.tar.gz

[root@master helm]# tar zxf helm-v2.14.3-linux-amd64.tar.gz

[root@master helm]# ls linux-amd64/

helm LICENSE README.md tiller

#Copy or move the helm binary into a directory on the system PATH

[root@master helm]# cp linux-amd64/helm /usr/local/bin/
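The download step above hard-codes one version and platform. A minimal sketch of parameterizing it follows; the helper name `helm_tarball_url` is hypothetical, and the URL pattern is inferred only from the single wget command above, so it is an assumption for other versions or platforms.

```shell
#!/bin/sh
# Build the download URL of a Helm release tarball.
# Assumption: other versions/platforms follow the same pattern as the
# helm-v2.14.3-linux-amd64.tar.gz URL used in this article.
helm_tarball_url() {
    version="$1"            # e.g. 2.14.3
    os="${2:-linux}"        # defaults match the article's environment
    arch="${3:-amd64}"
    printf 'https://get.helm.sh/helm-v%s-%s-%s.tar.gz\n' "$version" "$os" "$arch"
}

# Print the URL for the version installed in this article.
helm_tarball_url 2.14.3
```

The result can be passed straight to the download command, for example `wget "$(helm_tarball_url 2.14.3)"`.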

#Check the helm version

[root@master helm]# helm version

Client: &version.Version{SemVer:"v2.14.3", GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}

Error: could not find tiller

//helm version reports client version v2.14.3 and warns that the server side, Tiller, is not installed yet.

2) Command completion

Helm has many subcommands and flags. To work faster on the command line, it is generally recommended to install helm's bash completion script as follows:

[root@master helm]# echo "source <(helm completion bash)" >> /root/.bashrc

[root@master helm]# source /root/.bashrc

#Helm subcommands and flags can now be completed with the Tab key:

[root@master helm]# helm

completion dependency history inspect list repo search template verify

create fetch home install package reset serve test version

delete get init lint plugin rollback status upgrade

[root@master helm]# helm install --

--atomic --name= --timeout=

--ca-file= --namespace= --tls

--cert-file= --name-template= --tls-ca-cert=

--debug --no-crd-hook --tls-cert=

--dep-up --no-hooks --tls-hostname=

--description= --password= --tls-key=

--devel --render-subchart-notes --tls-verify

--dry-run --replace --username=

--home= --repo= --values=

--host= --set= --verify

--key-file= --set-file= --version=

--keyring= --set-string= --wait

--kubeconfig= --tiller-connection-timeout=

--kube-context= --tiller-namespace=

Tiller is the server side of Helm and normally runs on the k8s cluster. If RBAC authorization is enabled on the cluster, a suitable ServiceAccount must be created before Tiller can be installed.

1) Create a service account bound to the cluster-admin role

[root@master helm]# vim tiller-rbac.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: tiller
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: tiller
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: tiller
    namespace: kube-system

[root@master helm]# kubectl apply -f tiller-rbac.yaml

serviceaccount/tiller created

clusterrolebinding.rbac.authorization.k8s.io/tiller created

[root@master helm]# kubectl get serviceaccounts -n kube-system | grep tiller

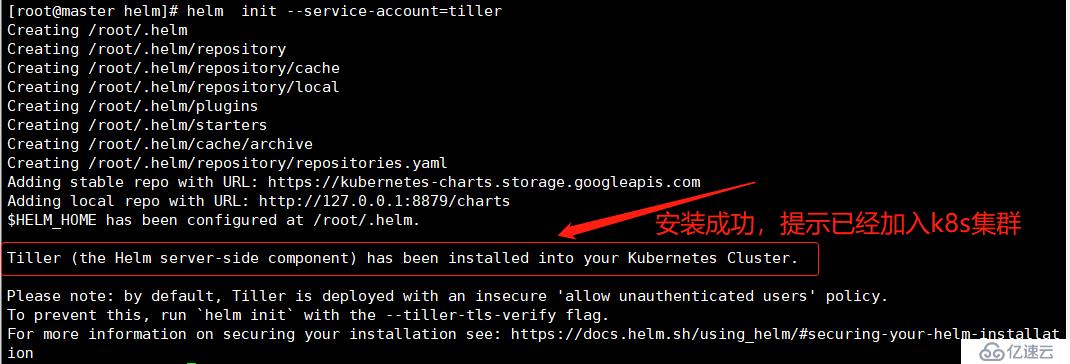

tiller 1 78s

2) Initialize the Tiller server environment (install the Tiller server)

[root@master helm]# helm init --service-account=tiller #--service-account points to the service account just created

#Check whether the Tiller server is running:

[root@master helm]# kubectl get pod -n kube-system | grep tiller

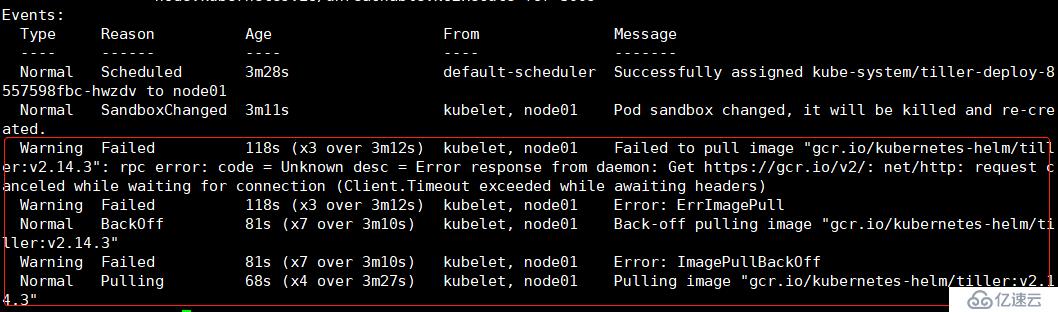

tiller-deploy-8557598fbc-hwzdv 0/1 ErrImagePull 0 2m53s

[root@master helm]# kubectl describe pod -n kube-system tiller-deploy-8557598fbc-hwzdv

#The pod details show that the image pull failed, because the image is hosted on Google's registry. We therefore download it from the Aliyun mirror instead. The events above also show that this Tiller pod is scheduled on node01, so the image only needs to be pulled on node01:

[root@node01 ~]# docker pull registry.aliyuncs.com/google_containers/tiller:v2.14.3

[root@node01 ~]# docker tag registry.aliyuncs.com/google_containers/tiller:v2.14.3 gcr.io/kubernetes-helm/tiller:v2.14.3 #retag it with the original image name

[root@node01 ~]# docker rmi -f registry.aliyuncs.com/google_containers/tiller:v2.14.3
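The pull, retag, and cleanup sequence above follows a fixed pattern: fetch the image from a mirror, then give it the name the Pod spec asks for. A small sketch of that pattern is shown below. The helper `mirror_commands` is hypothetical and only prints the docker commands rather than running them, and it assumes the mirror keeps the same `name:tag` as the original image, as it does in this article.

```shell
#!/bin/sh
# Print the docker commands that fetch an image from a mirror and retag it
# under the name the cluster expects. Nothing is executed here.
mirror_commands() {
    wanted="$1"                         # image name the Pod spec asks for
    mirror="$2"                         # mirror repository prefix
    mirrored="$mirror/${wanted##*/}"    # keep only "name:tag" of the original
    echo "docker pull $mirrored"
    echo "docker tag $mirrored $wanted"
    echo "docker rmi $mirrored"
}

mirror_commands gcr.io/kubernetes-helm/tiller:v2.14.3 registry.aliyuncs.com/google_containers
```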

[root@node01 ~]# docker images | grep tiller

gcr.io/kubernetes-helm/tiller v2.14.3 2d0a693df3ba 6 months ago 94.2MB

#Once the image is in place, the Tiller server runs normally:

[root@master helm]# kubectl get pod -n kube-system | grep tiller

tiller-deploy-8557598fbc-hwzdv 1/1 Running 0 17m

#helm version can now report the Tiller server version as well:

[root@master helm]# helm version

Client: &version.Version{SemVer:"v2.14.3", GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}

Server: &version.Version{SemVer:"v2.14.3", GitCommit:"0e7f3b6637f7af8fcfddb3d2941fcc7cbebb0085", GitTreeState:"clean"}

#With Helm installed, run helm repo list to see the configured repositories:

[root@master helm]# helm repo list

NAME URL

stable https://kubernetes-charts.storage.googleapis.com

local http://127.0.0.1:8879/charts

//Helm configures two repositories by default at installation: stable and local. stable is the official repository, and local is a local repository for charts you develop yourself.

#The default official repository is hosted abroad, so for convenience we point stable at a mirror inside China:

[root@master helm]# helm repo add stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

"stable" has been added to your repositories

//Listing the repositories again shows that the original source has been overwritten:

[root@master helm]# helm repo list

NAME URL

stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

local http://127.0.0.1:8879/charts

#After the change, run helm repo update to refresh the repositories:

[root@master helm]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Skip local chart repository

...Successfully got an update from the "stable" chart repository

Update Complete.

#Run helm search to list the charts available for installation, or to look up the versions of a particular service (the version shown is the chart package version):

[root@master helm]# helm search mysql

NAME CHART VERSION APP VERSION DESCRIPTION

stable/mysql 0.3.5 Fast, reliable, scalable, and easy to use open-source rel...

stable/percona 0.3.0 free, fully compatible, enhanced, open source drop-in rep...

stable/percona-xtradb-cluster 0.0.2 5.7.19 free, fully compatible, enhanced, open source drop-in rep...

stable/gcloud-sqlproxy 0.2.3 Google Cloud SQL Proxy

stable/mariadb 2.1.6 10.1.31 Fast, reliable, scalable, and easy to use open-source rel...

#For example, install the mysql chart with the following command:

[root@master helm]# helm install stable/mysql

#The installation prints the following information:

NAME: mean-spaniel

LAST DEPLOYED: Sat Feb 15 14:43:39 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

mean-spaniel-mysql Pending 0s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

mean-spaniel-mysql-5868455f75-n8lb6 0/1 Pending 0 0s

==> v1/Secret

NAME TYPE DATA AGE

mean-spaniel-mysql Opaque 2 0s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mean-spaniel-mysql ClusterIP 10.102.92.19 <none> 3306/TCP 0s

==> v1beta1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

mean-spaniel-mysql 0/1 1 0 0s

NOTES:

MySQL can be accessed via port 3306 on the following DNS name from within your cluster:

mean-spaniel-mysql.default.svc.cluster.local

To get your root password run:

MYSQL_ROOT_PASSWORD=$(kubectl get secret --namespace default mean-spaniel-mysql -o jsonpath="{.data.mysql-root-password}" | base64 --decode; echo)

To connect to your database:

1. Run an Ubuntu pod that you can use as a client:

kubectl run -i --tty ubuntu --image=ubuntu:16.04 --restart=Never -- bash -il

2. Install the mysql client:

$ apt-get update && apt-get install mysql-client -y

3. Connect using the mysql cli, then provide your password:

$ mysql -h mean-spaniel-mysql -p

To connect to your database directly from outside the K8s cluster:

MYSQL_HOST=127.0.0.1

MYSQL_PORT=3306

# Execute the following commands to route the connection:

export POD_NAME=$(kubectl get pods --namespace default -l "app=mean-spaniel-mysql" -o jsonpath="{.items[0].metadata.name}")

kubectl port-forward $POD_NAME 3306:3306

mysql -h ${MYSQL_HOST} -P${MYSQL_PORT} -u root -p${MYSQL_ROOT_PASSWORD}

The output has three parts:

(1) A description of this deployment of the chart:

NAME is the name of the release. Because we did not specify one with -n, Helm generated a random name, here mean-spaniel.

NAMESPACE is the namespace the release is deployed into. The default is default; it can be set with --namespace.

STATUS is DEPLOYED, meaning the chart has been deployed to the cluster.

(2) The resources contained in the release (RESOURCES):

Service, Deployment, Secret, and PersistentVolumeClaim, all named mean-spaniel-mysql. The naming format is "ReleaseName-ChartName".

(3) NOTES describes how to use the release, for example how to reach the Service, how to obtain the database password, and how to connect to the database.
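The "ReleaseName-ChartName" convention described above can be sketched in a few lines. The helper `resource_name` is hypothetical and exists only for illustration; in a real chart the name is produced by the chart's own templates, which may apply further rules such as truncation.

```shell
#!/bin/sh
# Compose a resource name in the "<release>-<chart>" form described above.
# Illustration only: the real name comes from the chart's templates.
resource_name() {
    printf '%s-%s\n' "$1" "$2"
}

# Release and chart names taken from the install output above.
resource_name mean-spaniel mysql
```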

#Run the following command to list deployed releases:

[root@master helm]# helm list

NAME REVISION UPDATED STATUS CHART APP VERSION NAMESPACE

mean-spaniel 1 Sat Feb 15 14:43:39 2020 DEPLOYED mysql-0.3.5 default

#Check the status of the release with the following command:

[root@master helm]# helm status mean-spaniel

Part of the output:

LAST DEPLOYED: Sat Feb 15 14:43:39 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

mean-spaniel-mysql Pending 26m

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

mean-spaniel-mysql-5868455f75-n8lb6 0/1 Pending 0 26m

==> v1/Secret

NAME TYPE DATA AGE

mean-spaniel-mysql Opaque 2 26m

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mean-spaniel-mysql ClusterIP 10.102.92.19 <none> 3306/TCP 26m

==> v1beta1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

mean-spaniel-mysql 0/1 1 0 26m

#In production, kubectl get and kubectl describe can also be used to inspect the individual objects of a release for quick troubleshooting. For example, look at the current pod:

[root@master helm]# kubectl get pod mean-spaniel-mysql-5868455f75-n8lb6

NAME READY STATUS RESTARTS AGE

mean-spaniel-mysql-5868455f75-n8lb6 0/1 Pending 0 31m

[root@master helm]# kubectl describe pod mean-spaniel-mysql-5868455f75-n8lb6

The pod's events show that the instance is not available yet because no PV has been prepared.

#To delete a deployed release, run helm delete. Note that --purge is required to remove the release record as well; without it the release is not deleted completely:

[root@master helm]# helm delete mean-spaniel --purge

release "mean-spaniel" deleted

A chart is the packaging format Helm uses for Kubernetes applications: a collection of files that describe a set of Kubernetes resources.

A single chart can deploy something simple, such as a memcached service, or something complex, such as a full stack with HTTP servers, a database, message middleware, and a cache.

A chart places these files in a predefined directory structure. The whole chart is usually packaged as a tar archive and labeled with a version, which makes it easy for Helm to deploy. The directory structure and the files it contains are described in detail below.

#Take the mysql chart installed earlier as an example. Once a chart has been installed, its tar package can be found in ~/.helm/cache/archive.

[root@master helm]# ls ~/.helm/cache/archive/

mysql-0.3.5.tgz

#After unpacking, the mysql chart has the following directory structure:

[root@master helm]# tree -C mysql/

mysql/
├── Chart.yaml
├── README.md
├── templates
│   ├── configmap.yaml
│   ├── deployment.yaml
│   ├── _helpers.tpl
│   ├── NOTES.txt
│   ├── pvc.yaml
│   ├── secrets.yaml
│   └── svc.yaml
└── values.yaml

1 directory, 10 files

It contains the following:

(1) Chart.yaml: a YAML file with summary information about the chart.

description: Fast, reliable, scalable, and easy to use open-source relational database
  system.
engine: gotpl
home: https://www.mysql.com/
icon: https://www.mysql.com/common/logos/logo-mysql-170x115.png
keywords:
- mysql
- database
- sql
maintainers:
- email: viglesias@google.com
  name: Vic Iglesias
name: mysql
sources:
- https://github.com/kubernetes/charts
- https://github.com/docker-library/mysql
version: 0.3.5

name and version are required; all other fields are optional.

(2) README.md: a README in Markdown format, that is, the chart's usage documentation. This file is optional.

(3) values.yaml: a chart can be customized at install time through parameters, and values.yaml supplies the default values of those parameters.

(4) templates directory: the configuration templates for the Kubernetes resources live here. Helm injects the parameter values from values.yaml into the templates to produce standard YAML manifests.

Templates are the most important part of a chart and the most powerful part of Helm. They make deployments flexible enough to fit different environments.
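The idea of injecting values into a template can be mimicked with a toy substitution. This is only a sketch: Helm renders templates with Go's template engine, not with sed, and the `render` helper is hypothetical. The `imageTag` key appears later in this article; the `image` key and the exact template line are assumptions made for the example.

```shell
#!/bin/sh
# Toy rendering: replace {{ .Values.<key> }} placeholders using sed.
# Helm itself uses Go templates; this only mimics the substitution idea.
render() {
    image="$1"; tag="$2"
    sed -e "s|{{ .Values.image }}|$image|g" \
        -e "s|{{ .Values.imageTag }}|$tag|g"
}

# A one-line stand-in for a template file (assumed, not copied from the chart).
template='image: "{{ .Values.image }}:{{ .Values.imageTag }}"'

printf '%s\n' "$template" | render mysql 5.7.14   # rendered with default values
printf '%s\n' "$template" | render mysql 5.7.15   # rendered with an overridden tag
```

The same template yields different manifests depending on the values supplied, which is what lets one chart serve several environments.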

Before installing, run helm inspect values to see how the mysql chart is meant to be used:

[root@master ~]# helm inspect values stable/mysql

The output is the content of values.yaml. Its comments tell you which parameters the mysql chart supports and what has to be prepared before installation. One part concerns storage:

## Persist data to a persistent volume
persistence:
  enabled: true
  ## database data Persistent Volume Storage Class
  ## If defined, storageClassName: <storageClass>
  ## If set to "-", storageClassName: "", which disables dynamic provisioning
  ## If undefined (the default) or set to null, no storageClassName spec is
  ##   set, choosing the default provisioner. (gp2 on AWS, standard on
  ##   GKE, AWS & OpenStack)
  ##
  # storageClass: "-"
  accessMode: ReadWriteOnce
  size: 8Gi

The chart defines a PVC that requests an 8Gi PV. Because this is a test environment, we have to create a matching PV in advance.
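Whether a hand-made PV can serve the chart's claim comes down to a few comparisons. The sketch below is deliberately simplified: the helper `pv_satisfies` is hypothetical, it compares capacity in whole Gi and a single access mode only, and it ignores the storage class and selectors that Kubernetes also takes into account.

```shell
#!/bin/sh
# Return success (0) when a PV could satisfy a PVC request.
# Simplified: whole-Gi capacity and one access mode; storage class
# and label selectors are not modelled here.
pv_satisfies() {
    pv_gi="$1"; pv_mode="$2"; req_gi="$3"; req_mode="$4"
    [ "$pv_gi" -ge "$req_gi" ] && [ "$pv_mode" = "$req_mode" ]
}

# The chart asks for 8Gi with ReadWriteOnce (see the values above).
if pv_satisfies 8 ReadWriteOnce 8 ReadWriteOnce; then
    echo "an 8Gi ReadWriteOnce PV can serve the chart's claim"
fi
```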

1) Create the PV:

//First set up NFS (the master node acts as the NFS server):

[root@master helm]# yum -y install nfs-utils

[root@master helm]# vim /etc/exports

/nfsdata/mysql *(rw,sync,no_root_squash)

[root@master helm]# mkdir -p /nfsdata/mysql

[root@master helm]# systemctl start rpcbind

[root@master helm]# systemctl start nfs-server

[root@master helm]# systemctl enable nfs-server

[root@master mysql]# showmount -e

Export list for master:

/nfsdata/mysql *

//Create mysql-pv with the following configuration:

apiVersion: v1
kind: PersistentVolume
metadata:
  name: mysql-pv
spec:
  accessModes:
    - ReadWriteOnce
  capacity:
    storage: 8Gi
  persistentVolumeReclaimPolicy: Retain
  nfs:
    path: /nfsdata/mysql
    server: 172.16.1.30

[root@master ~]# kubectl apply -f mysql-pv.yaml

persistentvolume/mysql-pv created

#Make sure the PV is usable:

[root@master helm]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

mysql-pv 8Gi RWO Retain Available

2) Install the mysql chart

//Install mysql (set the password of the mysql root user and give the release a name)

#Parameter values can be passed directly with --set:

[root@master helm]# helm install stable/mysql --set mysqlRootPassword=123.com -n test-mysql

//List the installed releases:

[root@master helm]# helm list

NAME REVISION UPDATED STATUS CHART APP VERSION NAMESPACE

test-mysql 1 Sun Feb 16 12:39:57 2020 DEPLOYED mysql-0.3.5 default

#Check the status of the release:

[root@master helm]# helm status test-mysql

LAST DEPLOYED: Mon Feb 17 11:51:38 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

test-mysql-mysql Bound mysql-pv 8Gi RWO 23m

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

test-mysql-mysql-dfb9b6944-f6pgs 1/1 Running 0 23m

==> v1/Secret

NAME TYPE DATA AGE

test-mysql-mysql Opaque 2 23m

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

test-mysql-mysql ClusterIP 10.103.220.95 <none> 3306/TCP 23m

==> v1beta1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

test-mysql-mysql 1/1 1 1 23m

NOTES:

MySQL can be accessed via port 3306 on the following DNS name from within your cluster:

test-mysql-mysql.default.svc.cluster.local

To get your root password run:

MYSQL_ROOT_PASSWORD=$(kubectl get secret --namespace default test-mysql-mysql -o jsonpath="{.data.mysql-root-password}" | base64 --decode; echo)

To connect to your database:

1. Run an Ubuntu pod that you can use as a client:

kubectl run -i --tty ubuntu --image=ubuntu:16.04 --restart=Never -- bash -il

2. Install the mysql client:

$ apt-get update && apt-get install mysql-client -y

3. Connect using the mysql cli, then provide your password:

$ mysql -h test-mysql-mysql -p

To connect to your database directly from outside the K8s cluster:

MYSQL_HOST=127.0.0.1

MYSQL_PORT=3306

# Execute the following commands to route the connection:

export POD_NAME=$(kubectl get pods --namespace default -l "app=test-mysql-mysql" -o jsonpath="{.items[0].metadata.name}")

kubectl port-forward $POD_NAME 3306:3306

mysql -h ${MYSQL_HOST} -P${MYSQL_PORT} -u root -p${MYSQL_ROOT_PASSWORD}

The PVC is Bound to mysql-pv, and the pod is running normally.

Note: if the pod is not running, first check whether the PVC has bound to the PV (the PV must show Available before the install and Bound afterwards). If the PV is fine, the image is probably still being pulled; the mysql image is fairly large, so this takes a while.
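The root-password command in the NOTES above pipes the Secret value through base64 --decode because Kubernetes stores Secret data base64-encoded. A small sketch of that decoding step follows. The encoded string is a sample produced for this example from the password used in this walkthrough, and `decode_secret` is a hypothetical helper.

```shell
#!/bin/sh
# Decode a base64-encoded Secret value by hand.
# The sample is the base64 form of the password set with --set earlier.
encoded='MTIzLmNvbQ=='

decode_secret() {
    printf '%s' "$1" | base64 --decode
}

decode_secret "$encoded"; echo
```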

3) Test logging in to mysql

#Note: if you do not know the mysql root password, it can be obtained as follows (the output of helm status already explains how):

[root@master helm]# helm status test-mysql

#The relevant part is the NOTES section:

NOTES:

MySQL can be accessed via port 3306 on the following DNS name from within your cluster:

test-mysql-mysql.default.svc.cluster.local

To get your root password run:

MYSQL_ROOT_PASSWORD=$(kubectl get secret --namespace default test-mysql-mysql -o jsonpath="{.data.mysql-root-password}" | base64 --decode; echo)

To connect to your database:

1. Run an Ubuntu pod that you can use as a client:

kubectl run -i --tty ubuntu --image=ubuntu:16.04 --restart=Never -- bash -il

2. Install the mysql client:

$ apt-get update && apt-get install mysql-client -y

3. Connect using the mysql cli, then provide your password:

$ mysql -h test-mysql-mysql -p

To connect to your database directly from outside the K8s cluster:

MYSQL_HOST=127.0.0.1

MYSQL_PORT=3306

# Execute the following commands to route the connection:

export POD_NAME=$(kubectl get pods --namespace default -l "app=test-mysql-mysql" -o jsonpath="{.items[0].metadata.name}")

kubectl port-forward $POD_NAME 3306:3306

mysql -h ${MYSQL_HOST} -P${MYSQL_PORT} -u root -p${MYSQL_ROOT_PASSWORD}

#Run the command given under "To get your root password run:":

[root@master helm]# kubectl get secret --namespace default test-mysql-mysql -o jsonpath="{.data.mysql-root-password}" | base64 --decode; echo

123.com #the mysql root password is 123.com

//With the password, test logging in to the mysql database:

[root@master helm]# kubectl exec -it test-mysql-mysql-dfb9b6944-f6pgs -- mysql -uroot -p123.com

mysql: [Warning] Using a password on the command line interface can be insecure.

Welcome to the MySQL monitor. Commands end with ; or \g.

Your MySQL connection id is 222

Server version: 5.7.14 MySQL Community Server (GPL)

Copyright (c) 2000, 2016, Oracle and/or its affiliates. All rights reserved.

Oracle is a registered trademark of Oracle Corporation and/or its

affiliates. Other names may be trademarks of their respective

owners.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

mysql> \s

--------------

mysql Ver 14.14 Distrib 5.7.14, for Linux (x86_64) using EditLine wrapper

Connection id: 222

Current database:

Current user: root@localhost

SSL: Not in use

Current pager: stdout

Using outfile: ''

Using delimiter: ;

Server version: 5.7.14 MySQL Community Server (GPL)

Protocol version: 10

Connection: Localhost via UNIX socket

Server characterset: latin1

Db characterset: latin1

Client characterset: latin1

Conn. characterset: latin1

UNIX socket: /var/run/mysqld/mysqld.sock

Uptime: 20 min 4 sec

Threads: 1 Questions: 486 Slow queries: 0 Opens: 109 Flush tables: 1 Open tables: 102 Queries per second avg: 0.403

--------------

1) Upgrade:

#Using the mysql release deployed above as an example, upgrade its version:

//Check the current mysql version:

[root@master helm]# kubectl get deployments. -o wide test-mysql-mysql

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

test-mysql-mysql 1/1 1 1 63m test-mysql-mysql mysql:5.7.14 app=test-mysql-mysql

#For example, upgrade mysql to 5.7.15:

[root@master helm]# helm upgrade --set imageTag=5.7.15 test-mysql stable/mysql #set the value with --set, followed by the release name and the chart

#After a short wait (the new image is pulled and a new pod is created), the upgrade succeeds:

[root@master helm]# kubectl get deployments. test-mysql-mysql -o wide

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

test-mysql-mysql 1/1 1 1 55m test-mysql-mysql mysql:5.7.15 app=test-mysql-mysql

//helm list shows the current revision of the release:

[root@master helm]# helm list #the release is now at revision 2

NAME REVISION UPDATED STATUS CHART APP VERSION NAMESPACE

test-mysql 2 Mon Feb 17 12:38:24 2020 DEPLOYED mysql-0.3.5 default

2) Rollback:

helm history lists all revisions of a release:

[root@master helm]# helm history test-mysql

REVISION UPDATED STATUS CHART DESCRIPTION

1 Mon Feb 17 11:51:38 2020 SUPERSEDED mysql-0.3.5 Install complete

2 Mon Feb 17 12:38:24 2020 DEPLOYED mysql-0.3.5 Upgrade complete

#For example, use helm rollback to roll mysql back to revision 1:

[root@master helm]# helm rollback test-mysql 1

Rollback was a success.

#Check whether the rollback took effect:

[root@master helm]# kubectl get deployments. -o wide test-mysql-mysql

NAME READY UP-TO-DATE AVAILABLE AGE CONTAINERS IMAGES SELECTOR

test-mysql-mysql 1/1 1 1 63m test-mysql-mysql mysql:5.7.14 app=test-mysql-mysql

//The image is back at 5.7.14.

#Checking the release again shows that its revision is now 3, because the rollback is recorded as a third revision.
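Why the counter reads 3 rather than 1 can be shown with a toy model of a release history. This is a sketch, not how Tiller stores releases: the functions and the one-line-per-revision log format are hypothetical, and only the image tag is tracked.

```shell
#!/bin/sh
# Toy release history: one "revision:imageTag" entry per line.
# Illustrative only; Tiller records far more than this.
log=""
revision=0

record() {                      # append a new revision with the given image tag
    revision=$((revision + 1))
    log="${log}${revision}:$1
"
}

tag_of() {                      # look up the image tag stored for a revision
    printf '%s' "$log" | awk -F: -v r="$1" '$1 == r { print $2 }'
}

release_install()  { record "$1"; }
release_upgrade()  { record "$1"; }
# A rollback copies the old configuration into a NEW revision.
release_rollback() { record "$(tag_of "$1")"; }

release_install 5.7.14     # revision 1
release_upgrade 5.7.15     # revision 2
release_rollback 1         # revision 3, same image tag as revision 1

echo "revision=$revision tag=$(tag_of "$revision")"
```

On the real cluster the same counter is the REVISION column printed by helm list.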

[root@master helm]# helm list

NAME REVISION UPDATED STATUS CHART APP VERSION NAMESPACE

test-mysql 3 Mon Feb 17 12:54:00 2020 DEPLOYED mysql-0.3.5 default

Creating PVs by hand, as in the mysql deployment above, is very inconvenient. In production, where many applications have to be deployed, a StorageClass can provision PVs for us instead. For details on StorageClass, see the blog post "k8s之StorageClass".

1) Deploy the NFS server:

[root@master ~]# yum -y install nfs-utils

[root@master ~]# vim /etc/exports

/nfsdata/SC *(rw,sync,no_root_squash)

[root@master ~]# mkdir -p /nfsdata/SC

[root@master ~]# systemctl restart rpcbind

[root@master ~]# systemctl restart nfs-server

[root@master ~]# showmount -e 172.16.1.30

Export list for 172.16.1.30:

/nfsdata/SC *

2) Create the RBAC permissions:

[root@master helm]# vim rbac-rolebind.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: nfs-provisioner
  namespace: default
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: nfs-provisioner-runner
  namespace: default
rules:
  - apiGroups: [""]
    resources: ["persistentvolumes"]
    verbs: ["get", "list", "watch", "create", "delete"]
  - apiGroups: [""]
    resources: ["persistentvolumeclaims"]
    verbs: ["get", "list", "watch", "update"]
  - apiGroups: ["storage.k8s.io"]
    resources: ["storageclasses"]
    verbs: ["get", "list", "watch"]
  - apiGroups: [""]
    resources: ["events"]
    verbs: ["watch", "create", "update", "patch"]
  - apiGroups: [""]
    resources: ["services", "endpoints"]
    verbs: ["get", "create", "list", "watch", "update"]
  - apiGroups: ["extensions"]
    resources: ["podsecuritypolicies"]
    resourceNames: ["nfs-provisioner"]
    verbs: ["use"]
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: run-nfs-provisioner
subjects:
  - kind: ServiceAccount
    name: nfs-provisioner
    namespace: default
roleRef:
  kind: ClusterRole
  name: nfs-provisioner-runner
  apiGroup: rbac.authorization.k8s.io

[root@master helm]# kubectl apply -f rbac-rolebind.yaml

serviceaccount/nfs-provisioner created

clusterrole.rbac.authorization.k8s.io/nfs-provisioner-runner created

clusterrolebinding.rbac.authorization.k8s.io/run-nfs-provisioner created

3) Create the Deployment for the NFS provisioner:

[root@master helm]# vim nfs-deployment.yaml

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: nfs-client-provisioner
  namespace: default
spec:
  replicas: 1
  strategy:
    type: Recreate
  template:
    metadata:
      labels:
        app: nfs-client-provisioner
    spec:
      serviceAccount: nfs-provisioner
      containers:
        - name: nfs-client-provisioner
          image: registry.cn-hangzhou.aliyuncs.com/open-ali/nfs-client-provisioner
          volumeMounts:
            - name: nfs-client-root
              mountPath: /persistentvolumes
          env:
            - name: PROVISIONER_NAME
              value: nfs-deploy
            - name: NFS_SERVER
              value: 172.16.1.30
            - name: NFS_PATH
              value: /nfsdata/SC
      volumes:
        - name: nfs-client-root
          nfs:
            server: 172.16.1.30
            path: /nfsdata/SC

//Load the nfs-client-provisioner image (on every node in the cluster, including the master)

[root@master helm]# docker load --input nfs-client-provisioner.tar

[root@master helm]# kubectl apply -f nfs-deployment.yaml

deployment.extensions/nfs-client-provisioner created

//Make sure the pod is running:

[root@master helm]# kubectl get pod nfs-client-provisioner-958547f7d-95jkg

NAME READY STATUS RESTARTS AGE

nfs-client-provisioner-958547f7d-95jkg 1/1 Running 0 42s

4) Create the storage class:

[root@master sc]# vim test-sc.yaml

apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: statefu-nfs
  namespace: default
provisioner: nfs-deploy
reclaimPolicy: Retain

[root@master helm]# kubectl apply -f test-sc.yaml

storageclass.storage.k8s.io/statefu-nfs created

[root@master helm]# kubectl get sc

NAME PROVISIONER AGE

statefu-nfs nfs-deploy 3m1s

5) Request a PV for the release

This is done by editing the values.yaml file in the chart directory. The values file can be obtained by unpacking the chart package:

[root@master helm]# tar zxf ~/.helm/cache/archive/mysql-0.3.5.tgz #for example, to deploy mysql

[root@master helm]# cd mysql/

[root@master mysql]# ls

Chart.yaml README.md templates values.yaml

[root@master mysql]# vim values.yaml

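#Edit it as follows (the listing itself is missing here; the release status further down shows a 5Gi claim with storage class statefu-nfs, so the persistence settings were evidently changed to match):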
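The same change can be made without an interactive editor. The sketch below is an assumption-laden illustration: the helper `set_persistence` is hypothetical, the sample file copies only the persistence keys quoted earlier in this article, and the target values (statefu-nfs, 5Gi) are inferred from the release status shown later rather than taken from an original listing.

```shell
#!/bin/sh
# Point the chart's PVC at a StorageClass by editing values.yaml with sed.
# Target values are inferred from the later status output, not from the source.
set_persistence() {
    file="$1"; class="$2"; size="$3"
    # Uncomment and set storageClass, then set size, keeping the indentation.
    sed -e "s|^\( *\)# storageClass:.*|\1storageClass: \"$class\"|" \
        -e "s|^\( *\)size:.*|\1size: $size|" "$file" > "$file.new" &&
    mv "$file.new" "$file"
}

# Demo on a throwaway file holding only the persistence section.
demo=$(mktemp)
cat > "$demo" <<'EOF'
persistence:
  enabled: true
  # storageClass: "-"
  accessMode: ReadWriteOnce
  size: 8Gi
EOF

set_persistence "$demo" statefu-nfs 5Gi
cat "$demo"
```

On a complete values.yaml the size pattern would touch every line that starts with a size key, so the result should be reviewed before installing.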

6) Install the mysql chart

#Note that the chart is installed from its local directory (this method is covered later):

[root@master helm]# helm install mysql/ -n new-mysql

#Check the release status:

[root@master helm]# helm status new-mysql

Part of the output:

LAST DEPLOYED: Mon Feb 17 13:38:09 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/PersistentVolumeClaim

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

new-mysql-mysql Bound pvc-6a4686cc-fb67-4577-8c6d-848a0ae800b5 5Gi RWO statefu-nfs 41s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

new-mysql-mysql-6cf95546fb-fqg54 1/1 Running 0 41s

==> v1/Secret

NAME TYPE DATA AGE

new-mysql-mysql Opaque 2 41s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

new-mysql-mysql ClusterIP 10.108.202.123 <none> 3306/TCP 41s

==> v1beta1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

new-mysql-mysql 1/1 1 1 41s

The PVC, pod, Service, and Deployment are all running normally, and the PVC was provisioned through the StorageClass (its status is already Bound).

A large number of official charts are available, but deploying your own microservice applications still requires developing your own charts. A chart you develop is at first usable only locally; it can then be packaged into a tar archive with helm package and shared with a team or the community.

Before creating a custom chart, let us look at the ways a chart can be installed. Helm supports four:

- Install a chart from a repository, for example: helm install stable/nginx

- Install from a tar package, for example: helm install ./nginx-1.2.3.tgz

- Install from a local chart directory, for example: helm install ./nginx

- Install from a URL, for example: helm install https://example.com/charts/nginx-1.2.3.tgz
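The four kinds of chart reference listed above can be told apart by their shape. The sketch below is a simplified illustration with a hypothetical helper, `chart_source`; Helm's own resolution logic is more involved and is not reproduced here.

```shell
#!/bin/sh
# Classify a chart reference into the four install sources listed above.
# Simplified illustration, not Helm's actual resolution logic.
chart_source() {
    ref="$1"
    case "$ref" in
        http://*|https://*) echo "url" ;;          # checked first: URLs may end in .tgz
        *.tgz)              echo "package" ;;
        *)
            if [ -d "$ref" ]; then
                echo "directory"
            else
                echo "repository"                  # e.g. stable/nginx
            fi ;;
    esac
}

chart_source stable/nginx
chart_source ./nginx-1.2.3.tgz
chart_source https://example.com/charts/nginx-1.2.3.tgz
```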

1) Create a custom chart

[root@master ~]# helm create mychart

Creating mychart

[root@master ~]# tree mychart/

mychart/
├── charts
├── Chart.yaml
├── templates
│   ├── deployment.yaml
│   ├── _helpers.tpl
│   ├── ingress.yaml
│   ├── NOTES.txt
│   ├── service.yaml
│   └── tests
│       └── test-connection.yaml
└── values.yaml

3 directories, 8 files

Helm creates the directory (mychart) and generates the chart files for us, so we can develop our own chart on top of them.
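As noted earlier, name and version are the required fields of Chart.yaml. A quick check for them can be sketched as follows. The helper `chart_has_required_fields` is hypothetical, it uses grep rather than a YAML parser, and the demo Chart.yaml content is a made-up sample, not the file helm create generates.

```shell
#!/bin/sh
# Check that a chart directory has a Chart.yaml declaring name and version.
# A grep-based sketch, not a YAML parser.
chart_has_required_fields() {
    chart_yaml="$1/Chart.yaml"
    [ -f "$chart_yaml" ] &&
    grep -q '^name:' "$chart_yaml" &&
    grep -q '^version:' "$chart_yaml"
}

# Demo on a throwaway directory with a sample Chart.yaml.
demo_chart=$(mktemp -d)
printf 'name: mychart\nversion: 0.1.0\n' > "$demo_chart/Chart.yaml"

if chart_has_required_fields "$demo_chart"; then
    echo "Chart.yaml declares name and version"
fi
```

For a fuller check, helm provides the lint subcommand listed in the completion output earlier.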

2пјүдҪҝз”ЁиҮӘе·ұејҖеҸ‘зҡ„chartпјҢз®ҖеҚ•йғЁзҪІnginxжңҚеҠЎ

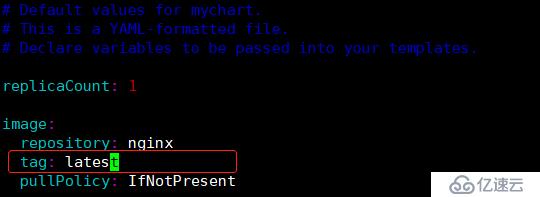

еҪ“жҲ‘们еҲӣе»әе®ҢchartеҗҺпјҢжҹҘзңӢй»ҳи®Өз”ҹжҲҗзҡ„values.yamlж–Ү件пјҡ

[root@master ~]# cat mychart/values.yaml

# Default values for mychart.
# This is a YAML-formatted file.
# Declare variables to be passed into your templates.

replicaCount: 1

image:
  repository: nginx
  tag: stable
  pullPolicy: IfNotPresent

imagePullSecrets: []
nameOverride: ""
fullnameOverride: ""

service:
  type: ClusterIP
  port: 80

ingress:
  enabled: false
  annotations: {}
    # kubernetes.io/ingress.class: nginx
    # kubernetes.io/tls-acme: "true"
  hosts:
    - host: chart-example.local
      paths: []

  tls: []
  #  - secretName: chart-example-tls
  #    hosts:
  #      - chart-example.local

resources: {}
  # We usually recommend not to specify default resources and to leave this as a conscious
  # choice for the user. This also increases chances charts run on environments with little
  # resources, such as Minikube. If you do want to specify resources, uncomment the following
  # lines, adjust them as necessary, and remove the curly braces after 'resources:'.
  # limits:
  #   cpu: 100m
  #   memory: 128Mi
  # requests:
  #   cpu: 100m
  #   memory: 128Mi

nodeSelector: {}

tolerations: []

affinity: {}

As shown, the chart deploys the nginx image by default with the tag stable. In this walkthrough the tag is changed to the version we want to run before the release is installed.

# Edit the values file directly (change the tag to the version you want to use):

[root@master ~]# vim mychart/values.yaml
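The edit itself is made interactively in vim. As a minimal sketch of the same change done non-interactively, the snippet below rewrites the tag with sed. It works on a stand-in copy of the image section under a hypothetical helm-demo/ directory rather than the real chart, and it assumes GNU sed and the tag latest, which is the value that appears in the computed values further down.

```shell
# Stand-in for the image section of mychart/values.yaml (helm-demo/ is a hypothetical scratch directory).
mkdir -p helm-demo
cat > helm-demo/values.yaml <<'EOF'
image:
  repository: nginx
  tag: stable
  pullPolicy: IfNotPresent
EOF

# Replace whatever follows "tag:" with the desired tag (GNU sed, in-place edit).
sed -i 's/^\(  tag:\).*/\1 latest/' helm-demo/values.yaml

# Show the result.
cat helm-demo/values.yaml
```

Another option that leaves values.yaml untouched is to override the value at install time, for example helm install mychart/ -n mynginx --set image.tag=latest.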

# Install the release:

[root@master ~]# helm install mychart/ -n mynginx

# Check the release status:

[root@master ~]# helm status mynginx

LAST DEPLOYED: Mon Feb 17 15:34:10 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

mynginx-mychart 1/1 1 1 10m

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

mynginx-mychart-bf987cd5d-vp9qp 1/1 Running 0 10m

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

mynginx-mychart ClusterIP 10.96.34.246 <none> 80/TCP 10m

NOTES:

1. Get the application URL by running these commands:

export POD_NAME=$(kubectl get pods --namespace default -l "app.kubernetes.io/name=mychart,app.kubernetes.io/instance=mynginx" -o jsonpath="{.items[0].metadata.name}")

echo "Visit http://127.0.0.1:8080 to use your application"

kubectl port-forward $POD_NAME 8080:80

# Test access to nginx:

[root@master ~]# curl -I 10.96.34.246

HTTP/1.1 200 OK #nginx responded successfully

Server: nginx/1.17.3

Date: Mon, 17 Feb 2020 07:45:39 GMT

Content-Type: text/html

Content-Length: 612

Last-Modified: Tue, 13 Aug 2019 08:50:00 GMT

Connection: keep-alive

ETag: "5d5279b8-264"

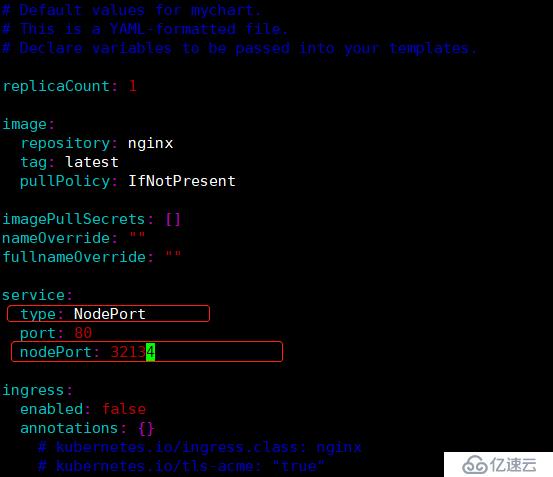

Accept-Ranges: bytes#дёҠйқўжҲ‘们дҪҝз”Ёзҡ„жҳҜClusterIPи®ҝй—®зҡ„nginxпјҢеҰӮжһңеӨ–йғЁеә”з”ЁйңҖиҰҒи®ҝй—®еҶ…йғЁжңҚеҠЎпјҢжҖҺд№ҲеҠһпјҹжүҖд»ҘжҲ‘们еҸҜд»Ҙд»ҘNodePortзҡ„ж–№ејҸе°ҶжңҚеҠЎз«ҜеҸЈжҳ е°„еҮәеҺ»гҖӮ

жіЁж„ҸпјҡжҲ‘们并дёҚиғҪеңЁvaluesж–Ү件дёӯзӣҙжҺҘж·»еҠ пјҢйңҖиҰҒе…ҲеңЁиҮӘе®ҡд№үchartзҡ„templatesзӣ®еҪ•дёӢзҡ„service.yamlж–Ү件иҝӣиЎҢж·»еҠ еҸҳйҮҸпјҢж“ҚдҪңеҰӮдёӢпјҡ

[root@master ~]# vim mychart/templates/service.yaml

service.yaml is a YAML file that contains Go template directives, so any change must follow that syntax, in particular the indentation of the surrounding lines.
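The exact edit is not shown in the text. Judging from the rendered Service in the dry-run output further down, where nodePort appears directly after targetPort, it probably amounts to one extra line in the ports entry. The sketch below applies that line with awk to a stand-in excerpt of the template. The excerpt is an assumption about what helm create generated for Helm 2, and helm-demo/ is a hypothetical scratch directory.

```shell
# Stand-in excerpt of mychart/templates/service.yaml (assumed Helm 2 scaffold).
mkdir -p helm-demo/templates
cat > helm-demo/templates/service.yaml <<'EOF'
spec:
  type: {{ .Values.service.type }}
  ports:
    - port: {{ .Values.service.port }}
      targetPort: http
      protocol: TCP
      name: http
EOF

# Print every line; after the targetPort line, print a copy of it in which
# "targetPort: http" is replaced by the nodePort reference (indentation is kept).
awk '{ print }
     /targetPort: http/ {
       sub(/targetPort: http/, "nodePort: {{ .Values.service.nodePort }}")
       print
     }' helm-demo/templates/service.yaml > helm-demo/templates/service.yaml.new
mv helm-demo/templates/service.yaml.new helm-demo/templates/service.yaml

cat helm-demo/templates/service.yaml
```

With this unconditional form the chart expects service.nodePort to be set whenever it is installed. Kubernetes does not accept a nodePort on a ClusterIP Service, so a chart that must support both types would need to wrap the added line in a template conditional.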

# With the nodePort field added to the Service template, next edit the values file:

[root@master ~]# vim mychart/values.yaml
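The resulting service settings can be read off the computed values in the dry-run output further down (type NodePort, port 80, nodePort 32134). As a sketch, the snippet below writes them to a separate override file instead of editing values.yaml in place. The file name nodeport-values.yaml and the helm-demo/ directory are hypothetical.

```shell
# Write the service overrides to a small values file (hypothetical name and location).
mkdir -p helm-demo
cat > helm-demo/nodeport-values.yaml <<'EOF'
service:
  type: NodePort
  port: 80
  nodePort: 32134
EOF

cat helm-demo/nodeport-values.yaml
```

Such a file could then be passed at install time with helm install mychart/ -n mynginx -f helm-demo/nodeport-values.yaml. The walkthrough itself puts the same three keys under service: in mychart/values.yaml.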

# After the changes, redeploy nginx:

[root@master ~]# helm delete mynginx --purge #delete the original release

release "mynginx" deleted

[root@master ~]# helm install mychart/ -n mynginx #reinstall

# Check the release status:

[root@master ~]# helm status mynginx

LAST DEPLOYED: Mon Feb 17 16:02:04 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

mynginx-mychart 1/1 1 1 16s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

mynginx-mychart-bf987cd5d-xdm2d 1/1 Running 0 16s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

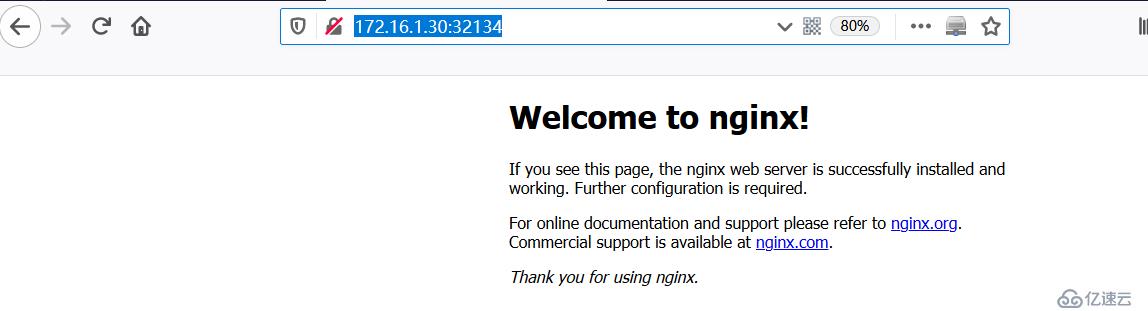

mynginx-mychart NodePort 10.100.31.89 <none> 80:32134/TCP 16s#еӨ–йғЁйҖҡиҝҮnodeportж–№ејҸи®ҝй—®nginxпјҡ
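A command-line check of the NodePort might look like the sketch below. It assumes 172.16.1.31, the node01 address used later in this walkthrough, is reachable from the machine running the command. A NodePort is normally opened on every node, so another node's address should work as well.

```shell
# Node address (assumption: node01, as used for the chart repository below) and the NodePort from the status output.
NODE_IP=172.16.1.31
NODE_PORT=32134
URL="http://${NODE_IP}:${NODE_PORT}"
echo "$URL"

# Request only the response headers; give up after a few seconds if the node is unreachable.
curl -I --connect-timeout 3 --max-time 5 "$URL" || echo "could not reach $URL from this machine"
```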

Any program can have bugs, and charts are no exception. Helm provides two debugging tools: helm lint and helm install --dry-run --debug.

1) helm lint:

helm lint checks the chart's syntax, reports errors, and offers suggestions.

# For example, if a colon ":" is left out in values.yaml, helm lint points out the syntax error:

[root@master ~]# helm lint mychart/

==> Linting mychart/

[INFO] Chart.yaml: icon is recommended

[ERROR] values.yaml: unable to parse YAML

error converting YAML to JSON: yaml: line 8: could not find expected ':'

Error: 1 chart(s) linted, 1 chart(s) failed

It is good practice to run helm lint right after editing the values file to catch mistakes like this early.

2) helm install --dry-run --debug:

helm install --dry-run --debug simulates installing the chart and prints the YAML generated by each template.

[root@master ~]# helm install --dry-run mychart/ --debug

[debug] Created tunnel using local port: '43350'

[debug] SERVER: "127.0.0.1:43350"

[debug] Original chart version: ""

[debug] CHART PATH: /root/mychart

NAME: exacerbated-grizzly

REVISION: 1

RELEASED: Mon Feb 17 16:18:48 2020

CHART: mychart-0.1.0

USER-SUPPLIED VALUES:
{}

COMPUTED VALUES:
affinity: {}
fullnameOverride: ""
image:
  pullPolicy: IfNotPresent
  repository: nginx
  tag: latest
imagePullSecrets: []
ingress:
  annotations: {}
  enabled: false
  hosts:
  - host: chart-example.local
    paths: []
  tls: []
nameOverride: ""
nodeSelector: {}
replicaCount: 1
resources: {}
service:
  nodePort: 32134
  port: 80
  type: NodePort
tolerations: []

HOOKS:
---
# exacerbated-grizzly-mychart-test-connection
apiVersion: v1
kind: Pod
metadata:
  name: "exacerbated-grizzly-mychart-test-connection"
  labels:
    app.kubernetes.io/name: mychart
    helm.sh/chart: mychart-0.1.0
    app.kubernetes.io/instance: exacerbated-grizzly
    app.kubernetes.io/version: "1.0"
    app.kubernetes.io/managed-by: Tiller
  annotations:
    "helm.sh/hook": test-success
spec:
  containers:
    - name: wget
      image: busybox
      command: ['wget']
      args: ['exacerbated-grizzly-mychart:80']
  restartPolicy: Never
MANIFEST:

---
# Source: mychart/templates/service.yaml
apiVersion: v1
kind: Service
metadata:
  name: exacerbated-grizzly-mychart
  labels:
    app.kubernetes.io/name: mychart
    helm.sh/chart: mychart-0.1.0
    app.kubernetes.io/instance: exacerbated-grizzly
    app.kubernetes.io/version: "1.0"
    app.kubernetes.io/managed-by: Tiller
spec:
  type: NodePort
  ports:
    - port: 80
      targetPort: http
      nodePort: 32134
      protocol: TCP
      name: http
  selector:
    app.kubernetes.io/name: mychart
    app.kubernetes.io/instance: exacerbated-grizzly
---
# Source: mychart/templates/deployment.yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: exacerbated-grizzly-mychart
  labels:
    app.kubernetes.io/name: mychart
    helm.sh/chart: mychart-0.1.0
    app.kubernetes.io/instance: exacerbated-grizzly
    app.kubernetes.io/version: "1.0"
    app.kubernetes.io/managed-by: Tiller
spec:
  replicas: 1
  selector:
    matchLabels:
      app.kubernetes.io/name: mychart
      app.kubernetes.io/instance: exacerbated-grizzly
  template:
    metadata:
      labels:
        app.kubernetes.io/name: mychart
        app.kubernetes.io/instance: exacerbated-grizzly
    spec:
      containers:
        - name: mychart
          image: "nginx:latest"
          imagePullPolicy: IfNotPresent
          ports:
            - name: http
              containerPort: 80
              protocol: TCP
          livenessProbe:
            httpGet:
              path: /
              port: http
          readinessProbe:
            httpGet:
              path: /
              port: http
          resources:

            {}

We can inspect this output and check whether it matches what we expect.

Once the chart passes testing, it can be added to a repository so that other team members can use it easily. Any HTTP server can serve as a chart repository; below we set one up on node01 in the cluster.

1) Run an httpd container on node01 (to provide the web service):

[root@node01 ~]# docker run -d -p 8080:80 -v /var/www/:/usr/local/apache2/htdocs httpd

a2fb5f89dd3fd3f729139e41a105498a60d0bee02c73ad8706636007390eaa55

2) Back on master, package mychart with helm package:

[root@master ~]# helm package mychart/

Successfully packaged chart and saved it to: /root/mychart-0.1.0.tgz

3) Run helm repo index to generate the repository's index file:

[root@master ~]# mkdir myrepo

[root@master ~]# mv mychart-0.1.0.tgz myrepo/

[root@master ~]# helm repo index myrepo/ --url http://172.16.1.31:8080/charts #URL of the chart repository (node01)

[root@master ~]# ls myrepo/

index.yaml mychart-0.1.0.tgz

helm scans all the tgz packages in the myrepo directory and generates index.yaml. --url specifies the URL at which the new chart repository will be reachable. The generated index.yaml records information about every chart currently in the repository:

[root@master ~]# cat myrepo/index.yaml

apiVersion: v1
entries:
  mychart:
  - apiVersion: v1
    appVersion: "1.0"
    created: "2020-02-17T16:34:25.239190623+08:00"
    description: A Helm chart for Kubernetes
    digest: 367436d83e973f89e4bac162837fb4e9579cf3176b2506a7ed6617a182f11031
    name: mychart
    urls:
    - http://172.16.1.31:8080/charts/mychart-0.1.0.tgz
    version: 0.1.0
generated: "2020-02-17T16:34:25.238618624+08:00"
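The digest field in index.yaml is the SHA-256 checksum of the packaged chart, so it can be used to confirm that the tgz served by the repository is the one that was indexed. The sketch below wraps that comparison in a hypothetical helper, verify_chart_digest, and demonstrates it on stand-in files under helm-demo/ rather than the real myrepo/ directory. It reads only the first digest: line, which is enough for a single-chart index like the one above.

```shell
# verify_chart_digest INDEX_FILE CHART_TGZ
# Compares the first "digest:" value in INDEX_FILE with the SHA-256 of CHART_TGZ.
verify_chart_digest() {
  index_file=$1
  chart_tgz=$2
  recorded=$(awk '$1 == "digest:" { print $2; exit }' "$index_file")
  actual=$(sha256sum "$chart_tgz" | awk '{ print $1 }')
  if [ "$recorded" = "$actual" ]; then
    echo "digest OK"
  else
    echo "digest mismatch"
    return 1
  fi
}

# Demo on stand-in files (not a real chart package or a complete index).
mkdir -p helm-demo/myrepo
printf 'stand-in for a packaged chart\n' > helm-demo/myrepo/mychart-0.1.0.tgz
sum=$(sha256sum helm-demo/myrepo/mychart-0.1.0.tgz | awk '{ print $1 }')
printf 'entries:\n  mychart:\n  - name: mychart\n    digest: %s\n' "$sum" > helm-demo/myrepo/index.yaml

verify_chart_digest helm-demo/myrepo/index.yaml helm-demo/myrepo/mychart-0.1.0.tgz
```

Against the real files the call would be verify_chart_digest myrepo/index.yaml myrepo/mychart-0.1.0.tgz.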

# The index lists a single chart, mychart.

4) Upload mychart-0.1.0.tgz and index.yaml to the /var/www/charts directory on node01.

# Create the directory on node01:

[root@node01 ~]# mkdir /var/www/charts

# Copy the files to node01:

[root@master ~]# scp myrepo/index.yaml myrepo/mychart-0.1.0.tgz node01:/var/www/charts

index.yaml 100% 400 0.4KB/s 00:00

mychart-0.1.0.tgz 100% 2842 2.8KB/s 00:00

5) Add the new repository to Helm with helm repo add:

[root@master ~]# helm repo add myrepo http://172.16.1.31:8080/charts

"myrepo" has been added to your repositories

[root@master ~]# helm repo list

NAME URL

stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts

local http://127.0.0.1:8879/charts

myrepo http://172.16.1.31:8080/charts

The repository is registered under the name myrepo, and Helm downloads index.yaml from it.

# Users can now find mychart with helm search:

[root@master ~]# helm search mychart

NAME CHART VERSION APP VERSION DESCRIPTION

local/mychart 0.1.0 1.0 A Helm chart for Kubernetes

myrepo/mychart 0.1.0 1.0 A Helm chart for Kubernetes

Besides the chart in the repository we just uploaded, the search also returns local/mychart. This is because the packaging operation in step 2 also synced mychart to the local repository.

# Install mychart from the new repository:

[root@master ~]# helm install myrepo/mychart -n new-nginx

# Check the release status:

[root@master ~]# helm status new-nginx #the pod is running normally

LAST DEPLOYED: Mon Feb 17 16:56:54 2020

NAMESPACE: default

STATUS: DEPLOYED

RESOURCES:

==> v1/Deployment

NAME READY UP-TO-DATE AVAILABLE AGE

new-nginx-mychart 1/1 1 1 55s

==> v1/Pod(related)

NAME READY STATUS RESTARTS AGE

new-nginx-mychart-66d6bbb795-fsgml 1/1 Running 0 55s

==> v1/Service

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

new-nginx-mychart NodePort 10.106.51.8 <none> 80:32134/TCP 55s

NOTES:

1. Get the application URL by running these commands:

export NODE_PORT=$(kubectl get --namespace default -o jsonpath="{.spec.ports[0].nodePort}" services new-nginx-mychart)

export NODE_IP=$(kubectl get nodes --namespace default -o jsonpath="{.items[0].status.addresses[0].address}")

echo http://$NODE_IP:$NODE_PORT

If new charts are added to the repository later, run helm repo update to refresh the local index.

[root@master ~]# helm repo update

Hang tight while we grab the latest from your chart repositories...

...Skip local chart repository

...Successfully got an update from the "myrepo" chart repository

...Successfully got an update from the "stable" chart repository

Update Complete.