
This article shows how to use Flume to collect logs from a specified network port and deliver them to console output and HDFS.

Requirement 1:

Collect logs from a specified network port and deliver them to console output and HDFS.

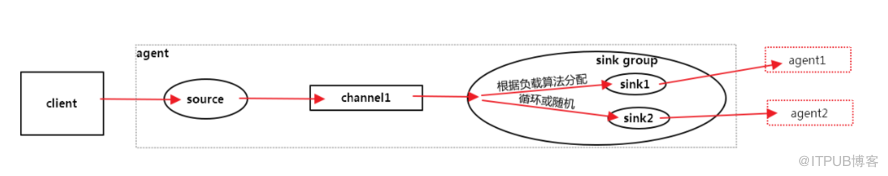

Load-balancing algorithms

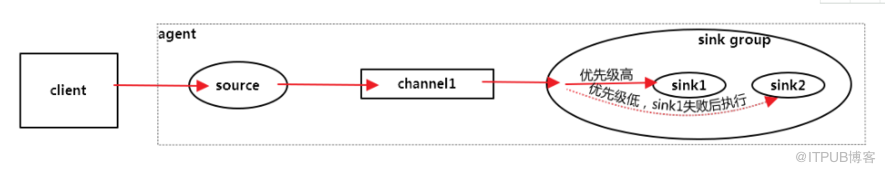

Failover: each sink can be given a priority; the larger the number, the higher the priority.

    a1.sinkgroups = g1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinkgroups.g1.processor.type = failover
    a1.sinkgroups.g1.processor.priority.k1 = 5
    a1.sinkgroups.g1.processor.priority.k2 = 10
    a1.sinkgroups.g1.processor.maxpenalty = 10000
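The priority rule can be illustrated with a small Python sketch. This is not Flume's implementation, only a model of the selection rule described above: among the sinks that have not failed, the one with the largest priority number is chosen. The function name `pick_failover_sink` and the `failed` set are hypothetical, and the back-off governed by `maxpenalty` is not modelled.

```python
from typing import Dict, Optional, Set


def pick_failover_sink(priorities: Dict[str, int], failed: Set[str]) -> Optional[str]:
    """Return the live sink with the highest priority, or None if every sink has failed."""
    # Keep only the sinks that are not currently marked as failed.
    live = {name: prio for name, prio in priorities.items() if name not in failed}
    if not live:
        return None
    # A larger number means a higher priority.
    return max(live, key=live.get)


# Priorities taken from the failover configuration above.
priorities = {"k1": 5, "k2": 10}
print(pick_failover_sink(priorities, failed=set()))   # k2
print(pick_failover_sink(priorities, failed={"k2"}))  # k1
```

With the priorities from the configuration, k2 is preferred, and k1 only takes over while k2 is marked as failed.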

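Load balancing: round-robin across all sinks in the group. Each event is delivered to one of the sinks, not to every sink.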

a1.sinkgroups.g1.processor.type = load_balance

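As a rough illustration of round-robin distribution, the following Python sketch hands events to the sinks in turn. It is a simplified model rather than Flume's code: the function name `distribute_round_robin` is hypothetical, and a real sink may take a whole batch of events on its turn (the HDFS sink, for example, is configured with a batch size), so the actual split is usually less even.

```python
from itertools import cycle
from typing import Dict, Iterable, List


def distribute_round_robin(events: Iterable[str], sinks: List[str]) -> Dict[str, List[str]]:
    """Assign each event to exactly one sink, visiting the sinks in a fixed rotation."""
    delivered: Dict[str, List[str]] = {name: [] for name in sinks}
    rotation = cycle(sinks)  # k1, k2, k1, k2, ...
    for event in events:
        delivered[next(rotation)].append(event)
    return delivered


result = distribute_round_robin(["e1", "e2", "e3", "e4"], ["k1", "k2"])
print(result)  # {'k1': ['e1', 'e3'], 'k2': ['e2', 'e4']}
```

The point to notice is that no event appears under both sinks: a load-balancing group splits the stream instead of copying it.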

    # Collect logs from a specified network port to console output and HDFS
    a1.sources = r1
    a1.sinks = k1 k2
    a1.channels = c1

    # Describe/configure the source
    a1.sources.r1.type = netcat
    a1.sources.r1.bind = 0.0.0.0
    a1.sources.r1.port = 44444

    # Describe the sink
    a1.sinkgroups = g1
    a1.sinkgroups.g1.sinks = k1 k2
    a1.sinkgroups.g1.processor.type = load_balance
    a1.sinks.k1.type = logger
    a1.sinks.k2.type = hdfs
    a1.sinks.k2.hdfs.path = hdfs://192.168.0.129:9000/user/hadoop/flume
    a1.sinks.k2.hdfs.batchSize = 10
    a1.sinks.k2.hdfs.fileType = DataStream
    a1.sinks.k2.hdfs.writeFormat = Text

    # Use a channel which buffers events in memory
    a1.channels.c1.type = memory
    a1.channels.c1.capacity = 1000
    a1.channels.c1.transactionCapacity = 100

    # Bind the source and sink to the channel
    a1.sources.r1.channels = c1
    a1.sinks.k1.channel = c1
    a1.sinks.k2.channel = c1
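To exercise an agent running with this configuration, a client has to write newline-terminated text to port 44444. The article does not say which client was used, so the following Python helper is only one possible way to do it. The function name `send_lines` is hypothetical, and the sketch does not read any response the source may send back.

```python
import socket
from typing import Iterable


def send_lines(host: str, port: int, lines: Iterable[str]) -> int:
    """Send each string as one newline-terminated line and return the number of bytes sent."""
    # One line of text is intended to become one event on the receiving side.
    payload = "".join(line + "\n" for line in lines).encode("utf-8")
    with socket.create_connection((host, port), timeout=5) as conn:
        conn.sendall(payload)
    return len(payload)


# Example call, assuming the agent listens on the local machine:
# send_lines("localhost", 44444, ["zourc ok", "asdf"])
```

The example call is left commented out so that the snippet can be imported without an agent running.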

Check the logger output:

2018-08-10 18:58:39,659 (lifecycleSupervisor-1-3) [INFO - org.apache.flume.source.NetcatSource.start(NetcatSource.java:169)] Created serverSocket:sun.nio.ch.ServerSocketChannelImpl[/0:0:0:0:0:0:0:0:44444]

2018-08-10 18:59:17,723 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:94)] Event: { headers:{} body: 7A 6F 75 72 63 20 6F 6B 0D zourc ok. }

2018-08-10 19:00:35,744 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.LoggerSink.process(LoggerSink.java:94)] Event: { headers:{} body: 61 73 64 66 0D asdf. }

2018-08-10 19:00:35,774 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.hdfs.HDFSDataStream.configure(HDFSDataStream.java:58)] Serializer = TEXT, UseRawLocalFileSystem = false

2018-08-10 19:00:36,086 (SinkRunner-PollingRunner-LoadBalancingSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:234)] Creating hdfs://192.168.0.129:9000/user/hadoop/flume/FlumeData.1533942035775.tmp

Check the HDFS output:

    [hadoop@hadoop001 flume]$ hdfs dfs -text hdfs://192.168.0.129:9000/user/hadoop/flume/*
    18/08/10 19:14:23 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable
    zourc1 2 3 4 5 6 7 8 9 10
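In the logger output above, each event body is printed as a hex dump followed by a printable rendering. A short Python sketch can turn the hex dump back into text; the helper name `decode_body` is hypothetical and it assumes the body is UTF-8.

```python
def decode_body(hex_dump: str) -> str:
    """Convert a space-separated hex dump, as printed by the logger sink, back to text."""
    # bytes.fromhex accepts spaces between the two-digit byte values.
    return bytes.fromhex(hex_dump).decode("utf-8")


print(repr(decode_body("7A 6F 75 72 63 20 6F 6B 0D")))  # 'zourc ok\r'
print(repr(decode_body("61 73 64 66 0D")))              # 'asdf\r'
```

The trailing `0D` byte is a carriage return, which the logger output renders as a dot at the end of each body.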


