您好,登录后才能下订单哦!

不知道大家之前对类似MySQL 5.6中如何通过Keepalived+互为主从实现高可用架构的文章有无了解,今天我在这里给大家再简单的讲讲。感兴趣的话就一起来看看正文部分吧,相信看完MySQL 5.6中如何通过Keepalived+互为主从实现高可用架构你一定会有所收获的。

一、测试环境

操作系统版本:Red Hat Enterprise Linux Server release 6.5 (Santiago)

Mysql版本:MySQL-5.6.38-1.el6.x86_64.rpm-bundle.tar

keepalived版本:keepalived-1.2.7-3.el6.x86_64.rpm

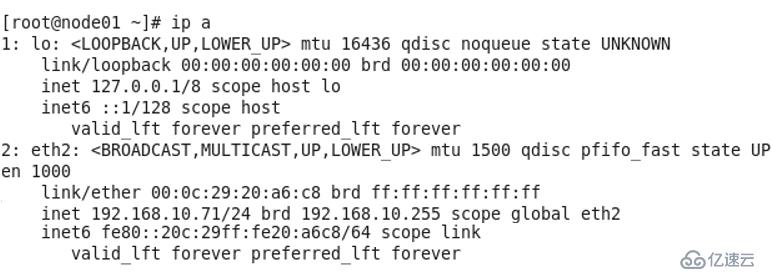

node01:192.168.10.71

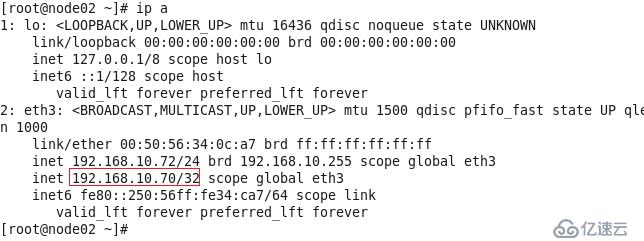

node02:192.168.10.72

VIP:192.168.10.70

二、配置node01为主、node02为从的主从同步

1、node01和node02分别安装好Mysql 5.6.38,安装方法请参考上一篇博文《MySQL 5.6.38在RedHat 6.5上通过RPM包安装》。

2、在node01和node02分别编辑/etc/my.cnf,配置如下:

[mysqld]

log-bin=mysql-bin

server-id = 1

[mysqld_safe]

log-error = /var/log/mysqld.log

pid-file = /var/run/mysqld/mysqld.pid

replicate-do-db = all

3、重启mysql服务

[root@node01 ~]# service mysql restart

[root@node02 ~]# service mysql restart

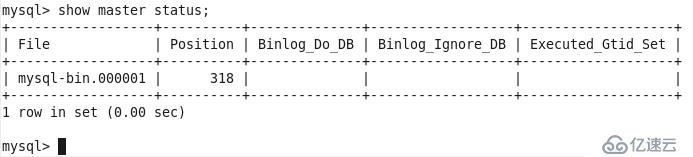

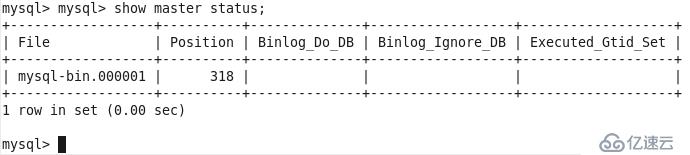

4、登录node01的mysql,创建用于同步的账户repl,密码为123456,并查询master状态,记下file名称和posttion数值

mysql> GRANT REPLICATION SLAVE ON *.* to 'repl'@'%' identified by '123456';

Query OK, 0 rows affected (0.00 sec)

mysql> show master status;

5、登录node02的mysql,执行以下语句开启从云服务器,注意master_host要填写node01的IP

mysql> change master to master_host='192.168.10.71',master_user='repl',master_password='123456',master_log_file='mysql-bin.000001',master_log_pos=318;

Query OK, 0 rows affected, 2 warnings (0.36 sec)

命令参数解释: master_host='192.168.10.71' ## Master 的 IP 地址 master_user='repl' ## 用于同步数据的用户(在 Master中授权的用户) master_password='123456' ## 同步数据用户的密码 master_log_file='mysql-bin.000001' ##指定 Slave 从哪个日志文 件开始读复制数据(可在 Master 上使用 show master status 查看到日志文件名) master_log_pos=429 ## 从哪个 POSITION 号开始读

mysql> start slave;

Query OK, 0 rows affected (0.03 sec)

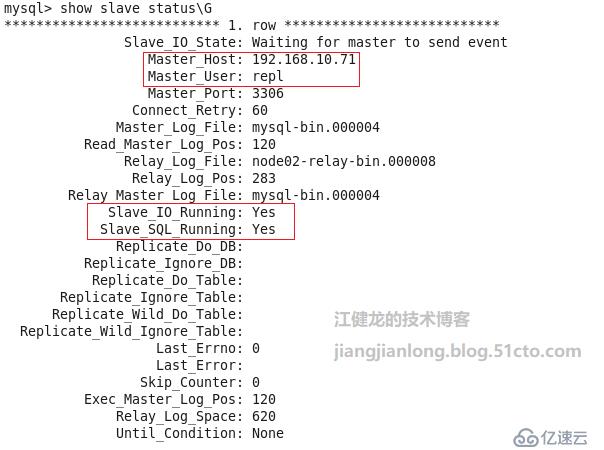

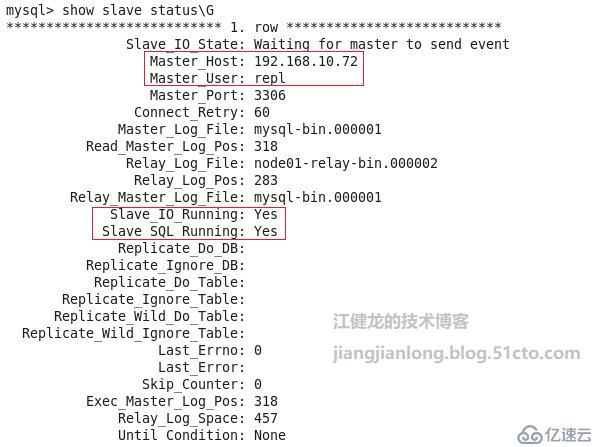

6、查询从服务的状态,状态正常

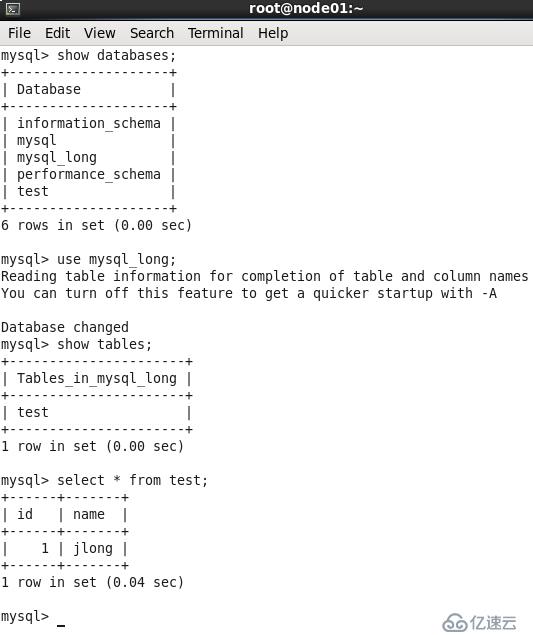

7、在node01创建一个数据库、一个表并插入一行数据,用于测试node02是否能同步过去

mysql> create database mysql_long;

Query OK, 1 row affected (0.00 sec)

mysql> use mysql_long;

Database changed

mysql> create table test(id int(3),name char(5));

Query OK, 0 rows affected (0.13 sec)

mysql> insert into test values (001,'jlong');

Query OK, 1 row affected (0.01 sec)

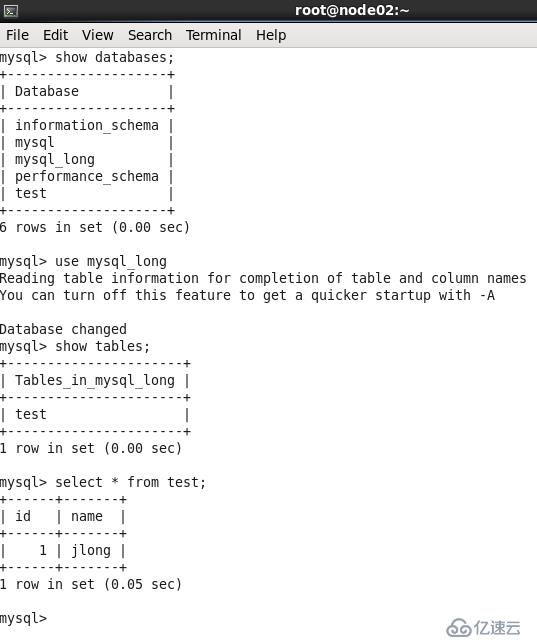

8、登录到node02的Mysql,同步正常

三、配置node02为主、node01为从的主从同步

1、登录node02的mysql,创建用于同步的账户repl,密码为123456,并查询master状态,记下file名称和posttion数值,并查询master状态

mysql> GRANT REPLICATION SLAVE ON *.* to 'repl'@'%' identified by '123456';

Query OK, 0 rows affected (0.00 sec)

Query OK, 0 rows affected (0.10 sec)

2、登录node01的mysql,执行以下语句开启从云服务器,注意这里master_host要填写node02的IP

mysql> change master to master_host='192.168.10.72',master_user='repl',master_password='123456',master_log_file='mysql-bin.000001',master_log_pos=318;

Query OK, 0 rows affected, 2 warnings (0.36 sec)

mysql> start slave;

Query OK, 0 rows affected (0.03 sec)

3、查询从服务的状态,状态正常

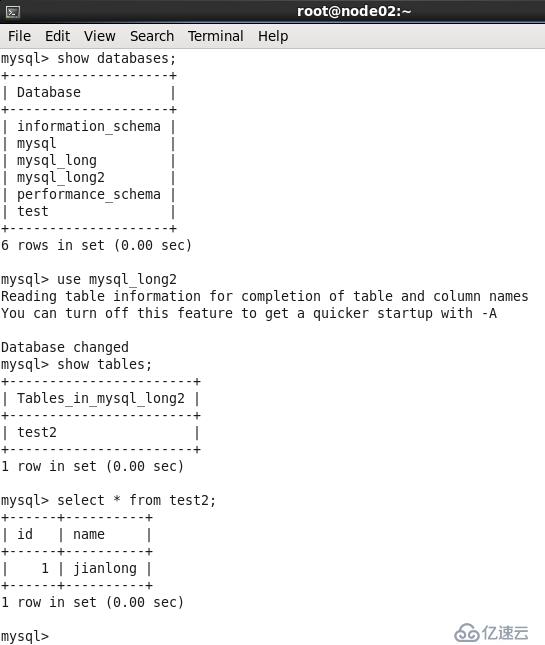

4、在node02创建一个数据库、一个表并插入一行数据,用于测试node01是否能同步过去

mysql> create database mysql_long2;

Query OK, 1 row affected (0.00 sec)

mysql> use mysql_long2;

Database changed

mysql> create table test2(id int(3),name char(10));

Query OK, 0 rows affected (0.71 sec)

mysql> insert into test2 values (001,'jianlong');

Query OK, 1 row affected (0.00 sec

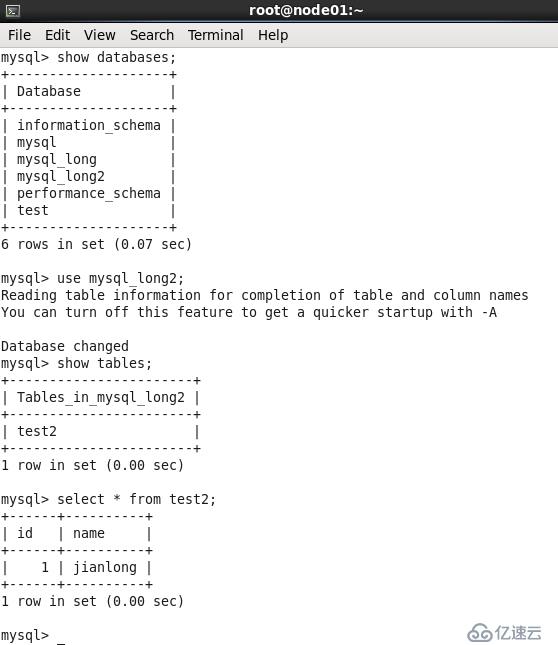

5、node01同步正常。

这样互为主从就配置好了,两台机既是对方的Master,又是对方的Slave,无论在哪一台机上数据发生了变化,另一台都能及时进行同步数据,下面我们开始配置keepalived实现高可用。

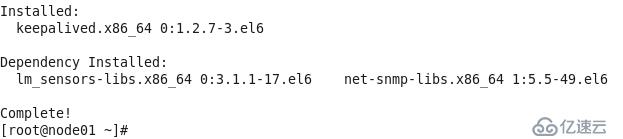

四、keepalived配置

1、在node01上使用yum安装keepalived

[root@node01 ~]# yum install keepalived -y

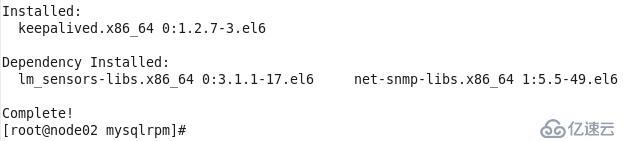

2、在node02上也使用yum安装keepalived

[root@node02 ~]# yum install keepalived -y

3、编辑node01的keepalived的配置文件

[root@node01 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from

Alexandre.Cassen@firewall.loc

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state BACKUP ##node01和node02都配置成BACKUP,角色由优先级确定

interface eth3

virtual_router_id 71

priority 100 ##node01的优先级设置比node02的高

advert_int 1

nopreempt ##设置不抢占(需在BACKUP状态下设置才有效)

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.70

}

}

virtual_server

192.168.10.70 3306 {

delay_loop 6

lb_algo wrr

lb_kind DR

nat_mask 255.255.255.0

persistence_timeout 50

protocol TCP

real_server 192.168.10.71 3306 {

weight 100

notify_down /etc/keepalived/stopkeepalived.sh #3306端口不可用则执行脚本

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

}4、编辑node02的keepalived的配置文件

[root@node02 ~]# cat /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

notification_email_from

Alexandre.Cassen@firewall.loc

smtp_server 192.168.200.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_instance VI_1 {

state BACKUP ##node01和node02都配置成BACKUP,角色由优先级确定

interface eth4

virtual_router_id 71

priority 90 ##node02的优先级设置比node01的低

advert_int 1

nopreempt ##设置不抢占(需在BACKUP状态下设置才有效)

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress {

192.168.10.70

}

}

virtual_server

192.168.10.70 3306 {

delay_loop 6

lb_algo wrr

lb_kind DR

nat_mask 255.255.255.0

persistence_timeout 50

protocol TCP

real_server 192.168.10.72 3306 {

weight 100

notify_down /etc/keepalived/stopkeepalived.sh #3306端口不可用则执行脚本

TCP_CHECK {

connect_timeout 10

nb_get_retry 3

delay_before_retry 3

connect_port 3306

}

}

}

[root@node02 ~]#5、node01和node02都编辑一个keepalived的自杀脚本/etc/keepalived/stopalived.sh,一旦检测到Mysql的3306端口不通,便执行此脚本触发vip的切换,脚本内容很简单,就是service keepalived stop就行,因为keepalived服务停止便会触发高可用的切换动作。

6、启动keepalived服务

[root@node01 ~]# service keepalived start

Starting keepalived: [ OK ]

[root@node02 ~]# service keepalived start

Starting keepalived: [ OK ]

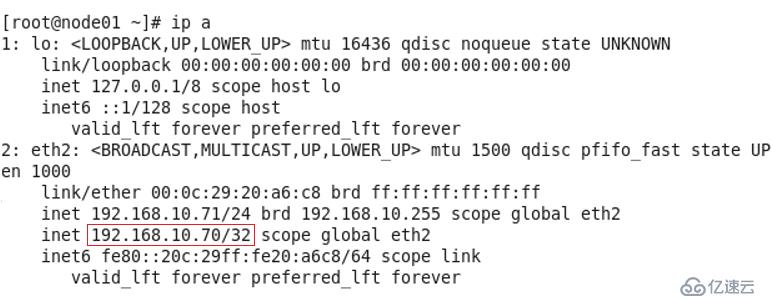

7、观察node01的message日志,由于node01的优先级高,因此进入了master角色,vip也已经加上了

[root@node01~]# tail -f /var/log/messages Oct 30 22:47:46 node01 Keepalived[3170]: Starting Keepalived v1.2.7 (09/26,2012) Oct 30 22:47:46 node01 Keepalived[3171]: Starting Healthcheck child process, pid=3173 Oct 30 22:47:46 node01 Keepalived[3171]: Starting VRRP child process, pid=3174 Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Interface queue is empty Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Netlink reflector reports IP 192.168.10.71 added Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Netlink reflector reports IP fe80::20c:29ff:fe20:a6c8 added Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Registering Kernel netlink reflector Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Registering Kernel netlink command channel Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Registering gratuitous ARP shared channel Oct 30 22:47:46 node01 kernel: IPVS: Registered protocols (TCP, UDP, SCTP, AH, ESP) Oct 30 22:47:46 node01 kernel: IPVS: Connection hash table configured (size=4096,memory=64Kbytes) Oct 30 22:47:46 node01 kernel: IPVS: ipvs loaded. Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Opening file '/etc/keepalived/keepalived.conf'. Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Configuration is using : 63319 Bytes Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: Using LinkWatch kernel netlink reflector... Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Interface queue is empty Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Netlink reflector reports IP 192.168.10.71 added Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Netlink reflector reports IP fe80::20c:29ff:fe20:a6c8 added Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Registering Kernel netlink reflector Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Registering Kernel netlink command channel Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Opening file '/etc/keepalived/keepalived.conf' Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Configuration is using : 11970 Bytes Oct 30 22:47:46 node01 Keepalived_vrrp[3174]: VRRP sockpool: [ifindex(2), proto(112), fd(11,12)] Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Using LinkWatch kernel netlink reflector... Oct 30 22:47:46 node01 Keepalived_healthcheckers[3173]: Activating healthchecker for service [192.168.10.71]:3306 Oct 30 22:47:46 node01 kernel: IPVS: [wrr] scheduler registered. Oct 30 22:47:47 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) Transition to MASTER STATE Oct 30 22:47:48 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) Entering MASTER STATE Oct 30 22:47:48 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) setting protocol VIPs. Oct 30 22:47:48 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth3 for 192.168.10.70 Oct 30 22:47:48 node01 Keepalived_healthcheckers[3173]: Netlink reflector reports IP 192.168.10.70 added

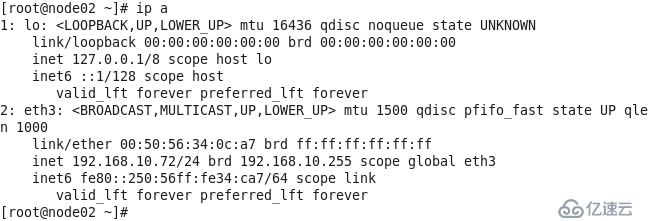

8、观察node02的message日志,node02进入了BACKUP角色,VIP自然不会加上。

[root@node02 ~]# tail -f /var/log/messages Oct 30 22:48:54 node02 Keepalived[16633]: Starting Keepalived v1.2.7 (09/26,2012) Oct 30 22:48:54 node02 Keepalived[16634]: Starting Healthcheck child process, pid=16636 Oct 30 22:48:54 node02 Keepalived[16634]: Starting VRRP child process, pid=16637 Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Interface queue is empty Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Interface queue is empty Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Netlink reflector reports IP 192.168.10.72 added Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Netlink reflector reports IP fe80::250:56ff:fe34:ca7 added Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Registering Kernel netlink reflector Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Registering Kernel netlink command channel Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Registering gratuitous ARP shared channel Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Opening file '/etc/keepalived/keepalived.conf'. Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Netlink reflector reports IP 192.168.10.72 added Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Netlink reflector reports IP fe80::250:56ff:fe34:ca7 added Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Registering Kernel netlink reflector Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Registering Kernel netlink command channel Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Opening file'/etc/keepalived/keepalived.conf'. Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Configuration is using : 11988 Bytes Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Configuration is using : 63337 Bytes Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: Using LinkWatch kernel netlink reflector... Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: VRRP_Instance(VI_1) Entering BACKUP STATE Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Using LinkWatch kernel netlink reflector... Oct 30 22:48:54 node02 Keepalived_vrrp[16637]: VRRP sockpool: [ifindex(2), proto(112),fd(10,11)] Oct 30 22:48:54 node02 Keepalived_healthcheckers[16636]: Activating healthchecker for service [192.168.10.72]:3306

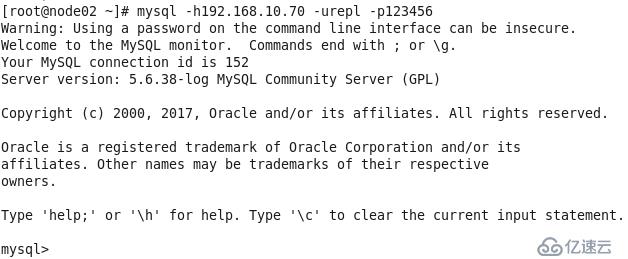

9、测试vip是否可能用来登录mysql,由于此时vip在node01上,我们就在node02上使用vip来测试登录,可见是没有问题的

五、Mysql高可用测试

1、停止node01的mysql服务,3306端口自然不通,keepalived检测到3306端口不通后执行自杀脚本停止自身服务,VIP被移除释放出来

[root@node01 ~]# service mysql stop Shutting down MySQL.. [ OK ]

2、观察node01的messages日志可以明显看出整个过程,VIP也已经不见了

[root@node01 ~]# tail -f /var/log/messages Oct 30 23:06:42 node01 Keepalived_healthcheckers[3173]: TCP connection to [192.168.10.71]:3306 failed !!! Oct 30 23:06:42 node01 Keepalived_healthcheckers[3173]: Removing service [192.168.10.71]:3306 from VS [192.168.10.70]:3306 Oct 30 23:06:42 node01 Keepalived_healthcheckers[3173]: Executing [/etc/keepalived/stopkeepalived.sh] for service [192.168.10.71]:3306 in VS [192.168.10.70]:3306 Oct 30 23:06:42 node01 Keepalived_healthcheckers[3173]: Lost quorum 1-0=1 > 0 for VS [192.168.10.70]:3306 Oct 30 23:06:42 node01 Keepalived_healthcheckers[3173]: SMTP connection ERROR to [192.168.200.1]:25. Oct 30 23:06:42 node01 kernel: IPVS: __ip_vs_del_service: enter Oct 30 23:06:42 node01 Keepalived[3171]: Stopping Keepalived v1.2.7 (09/26,2012) Oct 30 23:06:42 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) sending 0 priority Oct 30 23:06:42 node01 Keepalived_vrrp[3174]: VRRP_Instance(VI_1) removing protocol VIPs.

3、观察node02的messages日志,可以看到node02进入了MASTER角色,接管了VIP,VIP已经加上,从日志的时间看,切换的过程不过花了1秒,可说是秒级切换了。

[root@node02 ~]# tail -f /var/log/messages Oct 30 23:06:42 node02 Keepalived_vrrp[16637]: VRRP_Instance(VI_1) Transition to MASTER STATE Oct 30 23:06:43 node02 Keepalived_vrrp[16637]: VRRP_Instance(VI_1) Entering MASTER STATE Oct 30 23:06:43 node02 Keepalived_vrrp[16637]: VRRP_Instance(VI_1) setting protocol VIPs. Oct 30 23:06:43 node02 Keepalived_vrrp[16637]: VRRP_Instance(VI_1) Sending gratuitous ARPs on eth4 for 192.168.10.70 Oct 30 23:06:43 node02 Keepalived_healthcheckers[16636]: Netlink reflector reports IP 192.168.10.70 added

由于node01的keepalived进程被自杀脚本停止了,因此需要手动启动。之前我想是否需要跑一个监控脚本把keepalived服务自动开起来呢,后来我觉得不必要,因为如果mysql的服务依然异常,就算keepalived的服务起来了,它检测到本机的3306端口不通,还是会再次自杀。而既然mysql服务已经异常、端口都不通了,一般也是需要手动检查干预把mysql启动起来的,因此在mysql服务正常后再顺便手动起一下keepalived就好了。

看完MySQL 5.6中如何通过Keepalived+互为主从实现高可用架构这篇文章,大家觉得怎么样?如果想要了解更多相关,可以继续关注我们的行业资讯板块。

免责声明:本站发布的内容(图片、视频和文字)以原创、转载和分享为主,文章观点不代表本网站立场,如果涉及侵权请联系站长邮箱:is@yisu.com进行举报,并提供相关证据,一经查实,将立刻删除涉嫌侵权内容。