您好,登录后才能下订单哦!

一、爬虫时,出现urllib.error.HTTPError: HTTP Error 403: Forbidden

Traceback (most recent call last): File "D:/访问web.py", line 75, in <module> downHtml(url=url) File "D:/urllib访问web.py", line 44, in downHtml html=request.urlretrieve(url=url,filename='%s/%s.txt'%(savedir,get_domain_url(url=url))) File "C:\Python35\lib\urllib\request.py", line 187, in urlretrieve with contextlib.closing(urlopen(url, data)) as fp: File "C:\Python35\lib\urllib\request.py", line 162, in urlopen return opener.open(url, data, timeout) File "C:\Python35\lib\urllib\request.py", line 471, in open response = meth(req, response) File "C:\Python35\lib\urllib\request.py", line 581, in http_response 'http', request, response, code, msg, hdrs) File "C:\Python35\lib\urllib\request.py", line 503, in error result = self._call_chain(*args) File "C:\Python35\lib\urllib\request.py", line 443, in _call_chain result = func(*args) File "C:\Python35\lib\urllib\request.py", line 686, in http_error_302 return self.parent.open(new, timeout=req.timeout) File "C:\Python35\lib\urllib\request.py", line 471, in open response = meth(req, response) File "C:\Python35\lib\urllib\request.py", line 581, in http_response 'http', request, response, code, msg, hdrs) File "C:\Python35\lib\urllib\request.py", line 509, in error return self._call_chain(*args) File "C:\Python35\lib\urllib\request.py", line 443, in _call_chain result = func(*args) File "C:\Python35\lib\urllib\request.py", line 589, in http_error_default raise HTTPError(req.full_url, code, msg, hdrs, fp) urllib.error.HTTPError: HTTP Error 403: Forbidden

二、分析:

之所以出现上面的异常,是因为如果用 urllib.request.urlopen 方式打开一个URL,服务器端只会收到一个单纯的对于该页面访问的请求,但是服务器并不知道发送这个请求使用的浏览器,操作系统,硬件平台等信息,而缺失这些信息的请求往往都是非正常的访问,例如爬虫.有些网站为了防止这种非正常的访问,会验证请求信息中的UserAgent(它的信息包括硬件平台、系统软件、应用软件和用户个人偏好),如果UserAgent存在异常或者是不存在,那么这次请求将会被拒绝(如上错误信息所示)所以可以尝试在请求中加入UserAgent的信息

三、方案:

对于Python 3.x来说,在请求中添加UserAgent的信息非常简单,代码如下

#如果不加上下面的这行出现会出现urllib.error.HTTPError: HTTP Error 403: Forbidden错误

#主要是由于该网站禁止爬虫导致的,可以在请求加上头信息,伪装成浏览器访问User-Agent,具体的信息可以通过火狐的FireBug插件查询

headers = {'User-Agent':'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.75 Safari/537.36'}

req = urllib.request.Request(url=chaper_url, headers=headers)

urllib.request.urlopen(req).read()而使用request.urlretrieve库下载时,如下解决:

opener=request.build_opener()

opener.addheaders=[('User-Agent','Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/55.0.2883.75 Safari/537.36')]

request.install_opener(opener)

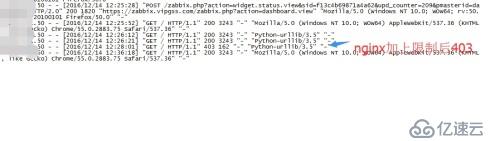

request.urlretrieve(url=url,filename='%s/%s.txt'%(savedir,get_domain_url(url=url)))四、效果图:

免责声明:本站发布的内容(图片、视频和文字)以原创、转载和分享为主,文章观点不代表本网站立场,如果涉及侵权请联系站长邮箱:is@yisu.com进行举报,并提供相关证据,一经查实,将立刻删除涉嫌侵权内容。