
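In PyTorch, how do you let only specific variables backpropagate gradients?

(Put another way: how do you keep a given variable out of backpropagation?)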

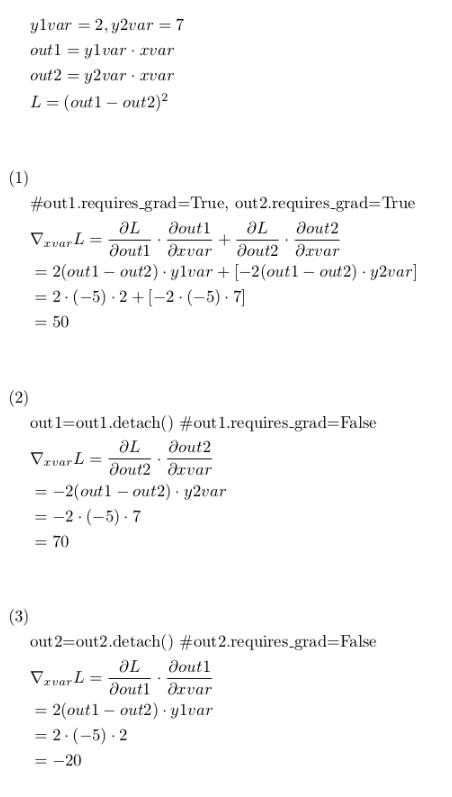

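Consider the formulas above, and suppose we want the derivative of L with respect to xvar:

In (1), the derivative of L with respect to xvar includes both the out1 term and the out2 term.

In (2), the derivative of L with respect to xvar includes only the out2 term, because out1 has requires_grad=False.

In (3), the derivative of L with respect to xvar includes only the out1 term, because out2 has requires_grad=False.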
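As a sanity check on the three cases that does not depend on PyTorch, the gradients can be estimated with a central finite difference. This is only a sketch: it assumes L = (out1 - out2)^2 with out1 = x*y1 and out2 = x*y2, and the values x=1, y1=2, y2=7, all taken from the verification script in this article. The helper names `loss` and `numeric_grad` are made up for illustration, and a "detached" term is modelled simply as a constant.

```python
# Values taken from the verification script: x = 1, y1 = 2, y2 = 7.
X0, Y1, Y2 = 1.0, 2.0, 7.0


def loss(x, detach_out1=False, detach_out2=False):
    """L = (out1 - out2)^2, where a detached term is frozen at its value at X0."""
    out1 = X0 * Y1 if detach_out1 else x * Y1
    out2 = X0 * Y2 if detach_out2 else x * Y2
    return (out1 - out2) ** 2


def numeric_grad(f, x, eps=1e-6):
    """Central finite-difference estimate of df/dx."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)


grad_case1 = numeric_grad(lambda x: loss(x), X0)
grad_case2 = numeric_grad(lambda x: loss(x, detach_out1=True), X0)
grad_case3 = numeric_grad(lambda x: loss(x, detach_out2=True), X0)

print("case (1): {:.3f}".format(grad_case1))  # approximately 50
print("case (2): {:.3f}".format(grad_case2))  # approximately 70
print("case (3): {:.3f}".format(grad_case3))  # approximately -20
```

The contributions of cases (2) and (3) add up to case (1), 70 + (-20) = 50, which is what you would expect if each case keeps exactly one of the two paths back to x.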

The following script verifies this:

#!/usr/bin/env python3

# -*- coding: utf-8 -*-

"""

Created on Wed May 23 10:02:04 2018

@author: hy

"""

import torch

from torch.autograd import Variable

print("Pytorch version: {}".format(torch.__version__))

x=torch.Tensor([1])

xvar=Variable(x,requires_grad=True)

y1=torch.Tensor([2])

y2=torch.Tensor([7])

y1var=Variable(y1)

y2var=Variable(y2)

#(1)

print("For (1)")

print("xvar requires_grad: {}".format(xvar.requires_grad))

print("y1var requires_grad: {}".format(y1var.requires_grad))

print("y2var requires_grad: {}".format(y2var.requires_grad))

out1 = xvar*y1var

print("out1 requires_grad: {}".format(out1.requires_grad))

out2 = xvar*y2var

print("out2 requires_grad: {}".format(out2.requires_grad))

L=torch.pow(out1-out2,2)

L.backward()

print("xvar.grad: {}".format(xvar.grad))

xvar.grad.data.zero_()

#(2)

print("For (2)")

print("xvar requires_grad: {}".format(xvar.requires_grad))

print("y1var requires_grad: {}".format(y1var.requires_grad))

print("y2var requires_grad: {}".format(y2var.requires_grad))

out1 = xvar*y1var

print("out1 requires_grad: {}".format(out1.requires_grad))

out2 = xvar*y2var

print("out2 requires_grad: {}".format(out2.requires_grad))

# Cut out1 off from the graph so only out2 carries gradient back to xvar

out1 = out1.detach()

print("after out1.detach(), out1 requires_grad: {}".format(out1.requires_grad))

L=torch.pow(out1-out2,2)

L.backward()

print("xvar.grad: {}".format(xvar.grad))

xvar.grad.data.zero_()

#(3)

print("For (3)")

print("xvar requires_grad: {}".format(xvar.requires_grad))

print("y1var requires_grad: {}".format(y1var.requires_grad))

print("y2var requires_grad: {}".format(y2var.requires_grad))

out1 = xvar*y1var

print("out1 requires_grad: {}".format(out1.requires_grad))

out2 = xvar*y2var

print("out2 requires_grad: {}".format(out2.requires_grad))

#out1 = out1.detach()

# Cut out2 off from the graph so only out1 carries gradient back to xvar

out2 = out2.detach()

print("after out2.detach(), out2 requires_grad: {}".format(out2.requires_grad))

L=torch.pow(out1-out2,2)

L.backward()

print("xvar.grad: {}".format(xvar.grad))

xvar.grad.data.zero_()
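For readers on PyTorch 0.4 or later, where plain tensors carry `requires_grad` themselves and the `Variable` wrapper is no longer needed, the same experiment might be condensed as follows. This is a sketch rather than the original author's code: the helper `grad_wrt_x` and its keyword arguments are hypothetical names, and a fresh `x` is created per case so there is no gradient to zero between runs.

```python
import torch


def grad_wrt_x(detach_out1=False, detach_out2=False):
    """Return dL/dx for L = (x*y1 - x*y2)^2, optionally detaching one term."""
    x = torch.tensor([1.0], requires_grad=True)
    y1 = torch.tensor([2.0])
    y2 = torch.tensor([7.0])

    out1 = x * y1
    out2 = x * y2
    if detach_out1:
        out1 = out1.detach()  # out1 becomes a constant for autograd
    if detach_out2:
        out2 = out2.detach()  # out2 becomes a constant for autograd

    loss = torch.pow(out1 - out2, 2)
    loss.backward()
    return x.grad.item()


grads = {
    "(1)": grad_wrt_x(),
    "(2)": grad_wrt_x(detach_out1=True),
    "(3)": grad_wrt_x(detach_out2=True),
}
for case, value in grads.items():
    print("case {}: xvar.grad = {}".format(case, value))
```

With x=1, y1=2 and y2=7 the three gradients work out to 50, 70 and -20, so the two partial cases sum to the full gradient.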

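In PyTorch, setting a variable's requires_grad to False keeps it out of gradient backpropagation.

However, you cannot simply assign out1.requires_grad=False: out1 is the result of an operation rather than a leaf variable, and PyTorch only lets you change the requires_grad flag on leaf variables.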
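A small sketch of what the direct assignment does, assuming the tensor API of PyTorch 0.4 or later instead of `Variable`. The variable names here are illustrative, and the exact wording of the error message may differ between versions.

```python
import torch

x = torch.tensor([1.0], requires_grad=True)
out1 = x * torch.tensor([2.0])  # result of an operation, so not a leaf

print("out1.is_leaf:", out1.is_leaf)

try:
    out1.requires_grad = False  # not allowed on a non-leaf that requires grad
    err_text = None
except RuntimeError as err:
    err_text = str(err)

print("assignment raised:", err_text)
print("out1.requires_grad is still:", out1.requires_grad)
```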

Instead, the Variable type provides a detach() method, and the variable it returns has requires_grad set to False.
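To make the behaviour of `detach()` concrete, here is a short sketch, again assuming PyTorch 0.4 or later and using illustrative names. It shows that the detached result has no gradient history, that the original `out1` is left untouched, and that both share the same underlying storage.

```python
import torch

x = torch.tensor([1.0], requires_grad=True)
out1 = x * torch.tensor([2.0])

frozen = out1.detach()  # new tensor, same data, no gradient history

print("out1.requires_grad:  ", out1.requires_grad)
print("frozen.requires_grad:", frozen.requires_grad)
print("frozen.grad_fn:      ", frozen.grad_fn)
print("shares storage:      ", frozen.data_ptr() == out1.data_ptr())
```

Because the storage is shared, modifying `frozen` in place also changes the values seen through `out1`; call `.clone()` on the detached tensor if an independent copy is needed.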

Note: if both out1 and out2 have requires_grad=False, L itself no longer requires a gradient, so L.backward() raises an error and no gradient ever reaches xvar.
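The failure mode can be reproduced with a few lines. This sketch assumes PyTorch 0.4 or later and uses a fresh `x`, so its `.grad` starts out as `None`; the flag `backward_failed` is just an illustrative name.

```python
import torch

x = torch.tensor([1.0], requires_grad=True)
out1 = (x * torch.tensor([2.0])).detach()
out2 = (x * torch.tensor([7.0])).detach()

# Both inputs are constants for autograd, so the loss has no graph at all.
loss = torch.pow(out1 - out2, 2)
print("loss.requires_grad:", loss.requires_grad)

try:
    loss.backward()
    backward_failed = False
except RuntimeError as err:
    backward_failed = True
    print("backward() failed:", err)

print("x.grad:", x.grad)  # nothing was ever propagated to x
```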

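Addendum:

volatile=True means no gradient is computed for the variable. For reference: "Volatile is recommended for purely inference mode, when you're sure you won't be even calling .backward(). It's more efficient than any other autograd setting - it will use the absolute minimal amount of memory to evaluate the model. volatile also determines that requires_grad is False." Note that as of PyTorch 0.4 the volatile flag is deprecated and has no effect; torch.no_grad() is the replacement for inference-only code.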
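On PyTorch 0.4 and later, the inference-only behaviour that `volatile=True` used to provide is usually obtained with the `torch.no_grad()` context manager. A minimal sketch, with illustrative variable names:

```python
import torch

x = torch.tensor([1.0], requires_grad=True)
y = torch.tensor([2.0])

with torch.no_grad():
    # No graph is recorded inside this block, even though x requires grad.
    out = x * y
    grad_enabled_inside = torch.is_grad_enabled()

print("grad enabled inside block:", grad_enabled_inside)
print("out.requires_grad:", out.requires_grad)
print("out.grad_fn:", out.grad_fn)
```

Unlike `detach()`, which cuts a single tensor out of the graph, `torch.no_grad()` disables graph recording for every operation inside the block, so it suits whole-model inference better than selective gradient blocking.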
